How to determine true audio quality of streaming audio

A couple of years ago we wrote an article on how to determine the true bitrate of any audio file, as well as why converting YouTube to 320kbps MP3 is a waste of time. Our aim was to help users determine the true audio quality of music files they have paid for and downloaded, and to avoid music services that claim to offer high-quality lossless audio but are actually serving up MP3s converted into FLAC, for example.

Many users also asked how they can determine the true audio quality of streaming music, rather than local files. This is a great question, as many HiFi streaming services have sprung up in recent years, claiming to offer high-quality lossless music streams to their users.

You might think you’d be able to just record the streaming audio, save it locally as a .WAV file, and run it through a spectrum analyzer like Spek. Unfortunately, this doesn’t work: a recording made through your regular motherboard sound card is not a bit-perfect copy of the stream, so any analysis of it would reflect the capture chain as much as the source.

So what we need instead is a highly accurate spectrum analyzer that works on streaming audio, and an understanding of how to read it in real time.

Requirements:

- MusicScope software

- Streaming audio service of your choice

MusicScope is a real-time audio analyzer and measuring tool which can deliver very precise feedback on streaming audio. Unfortunately, the developers stopped selling licenses for the software, but the trial version allows you to test up to 30 seconds of audio.

For the purpose of this guide, we are going to give examples of using the software with local files in different formats. However, all of the information given can equally apply to streaming audio, such as from Spotify, Deezer, etc.

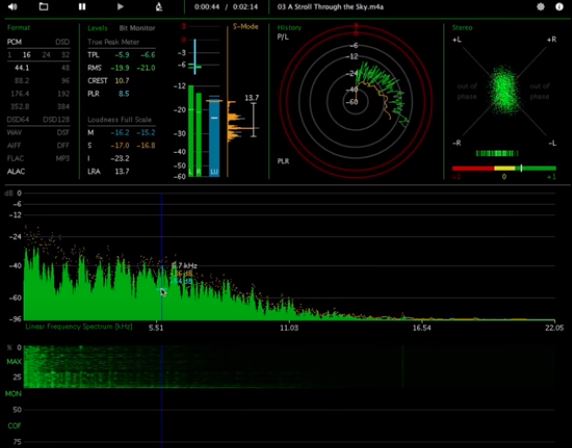

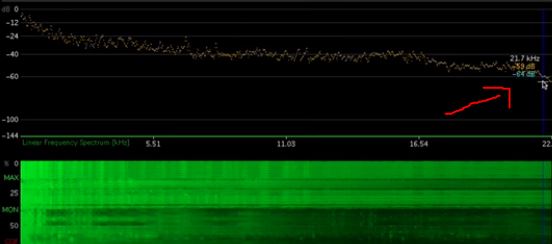

Determining frequency range and loudness range (LRA)

Let’s try a lossless (.M4A ALAC) track, “A Stroll Through the Sky” from the film Howl’s Moving Castle. It’s an orchestral recording, so we should get a nice sample of all frequency ranges. For example, we can see isolated high-frequency peaks, like cymbal shimmers, between 11 and 22 kHz.

As we watch the graphs in MusicScope, we can see that there is a very high dynamic range, as we would expect from an orchestral recording.

MusicScope can also give us the LRA (loudness range), which measures the contrast between the softest and loudest passages of a track. It is expressed in loudness units (LU), where 1 LU corresponds to 1 dB. For this specific track, we can see there’s a difference of about 23 decibels between the softest and loudest passages.
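
If you’re curious where a number like that comes from, here is a minimal Python sketch of the idea behind loudness range. It is loosely modelled on the EBU Tech 3342 approach: gate out silence, then take the spread between a low and a high percentile of short-term loudness readings. We don’t know how MusicScope computes its own figure, so treat the function name, the nearest-rank percentile shortcut, and the sample readings as our assumptions rather than the tool’s actual method.

```python
import math


def loudness_range(short_term_lufs, abs_gate=-70.0, rel_gate=-20.0,
                   low_pct=10, high_pct=95):
    """Simplified loudness range (in LU) from short-term loudness readings.

    short_term_lufs: loudness readings in LUFS, e.g. one per 3-second window.
    This is an illustrative approximation, not a certified meter.
    """
    # Absolute gate: ignore near-silent readings.
    gated = [v for v in short_term_lufs if v >= abs_gate]
    if not gated:
        return 0.0

    # Average in the power domain, then convert back to a loudness value.
    mean_power = sum(10 ** (v / 10) for v in gated) / len(gated)
    integrated = 10 * math.log10(mean_power)

    # Relative gate: drop readings far below the overall loudness.
    gated = sorted(v for v in gated if v >= integrated + rel_gate)

    def percentile(p):
        # Nearest-rank shortcut (an assumption made to keep the sketch small).
        return gated[round((len(gated) - 1) * p / 100)]

    return percentile(high_pct) - percentile(low_pct)


if __name__ == "__main__":
    # Hypothetical readings: a steady climb from -40 to -20 LUFS,
    # plus one near-silent reading that the absolute gate removes.
    readings = [-80.0] + [-40.0 + i for i in range(21)]
    print(loudness_range(readings))      # 17.0
    print(loudness_range([-10.0] * 10))  # 0.0 (no contrast at all)
```

A track whose loudness barely moves scores close to 0, while a recording with long quiet and loud stretches scores high.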

In terms of microdynamics, this specific track has a very high dynamic range, which we would expect from a high-quality orchestral recording, but there are also a few interesting things happening.

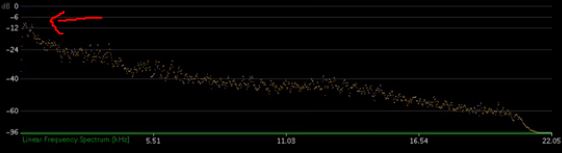

MusicScope can tell us if a track would benefit from being mastered at a higher resolution. This track in particular is stored at a 16-bit depth and a 44.1 kHz sample rate, but we can tell it has a lot of unused headroom: from 0 to 6 decibels below full-scale, there’s no data in the Linear Frequency Spectrum.

So this track has an effective bit depth of only around 14 to 15 bits (each bit is worth roughly 6 dB of dynamic range), which means either dynamic range compression was applied during mastering, or the microphones used during recording did not pick up all the information.
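
The arithmetic behind that estimate can be sketched in a few lines of Python. This is only a rule-of-thumb calculation, assuming ideal linear PCM where every bit contributes about 6.02 dB. The function names are ours, and the 12 dB case is a hypothetical value added to show the lower end of the 14 to 15 bit range.

```python
import math

# Each bit doubles the number of amplitude steps: 20 * log10(2), about 6.02 dB.
DB_PER_BIT = 20 * math.log10(2)


def theoretical_dynamic_range_db(bit_depth: int) -> float:
    """Approximate dynamic range of ideal linear PCM at this bit depth."""
    return bit_depth * DB_PER_BIT


def effective_bits(bit_depth: int, unused_headroom_db: float) -> float:
    """Bits actually exercised when the top of the range is left empty."""
    return bit_depth - unused_headroom_db / DB_PER_BIT


if __name__ == "__main__":
    print(f"{theoretical_dynamic_range_db(16):.1f} dB")  # 96.3 dB
    print(f"{theoretical_dynamic_range_db(24):.1f} dB")  # 144.5 dB
    # 6 dB of empty headroom costs about one bit; 12 dB (hypothetical) costs two.
    print(f"{effective_bits(16, 6.0):.1f} bits")   # 15.0 bits
    print(f"{effective_bits(16, 12.0):.1f} bits")  # 14.0 bits
```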

So even if there were a 96 kHz version of this file, it wouldn’t benefit, most likely because the microphones used during recording did not pick up any more data. Most microphones are designed to cover the frequencies of the human hearing range, so in all honesty, a 96 kHz / 24-bit version of this track wouldn’t offer any noticeable difference.

The takeaway from this is that in order to improve audio quality, we focus on what happens during the recording and mastering stage. An over-focus on “high resolution” audio files for the sake of high resolution files distracts us from what really matters, which is the recording equipment and process used.

How to know if a song could have a better audio version

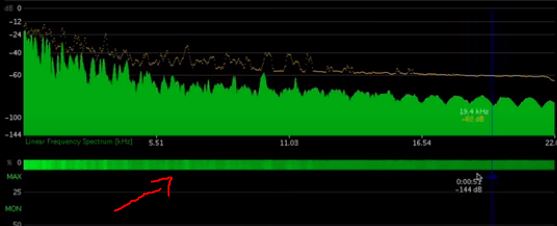

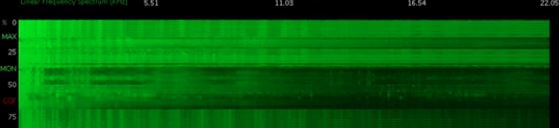

Let’s try an EDM track, “Zebra” by Oneohtrix Point Never, in 24-bit 44.1 kHz format. What’s interesting about this particular track is the sheer density of musical information. You can see a solid green block on the spectrogram, and watch it fill up throughout the track.

This track has an LRA of about 12.9, which is pretty high for an EDM track. What’s interesting here is that it’s a 24-bit track that actually makes use of the extra bit depth: the softest music in this recording is around 100 dB below the loudest, which is more than the roughly 96 dB a 16-bit file can represent.

You can tell just by looking at the spectrogram that this track is cut off at 22 kHz. It’s a really hard cutoff, and the high-frequency peaks at around 22 kHz are still only about 60 decibels below full-scale.

This means that if we had a 96 kHz version of this track, there would probably be plenty of information left over above 22 kHz, which did not make it into this version of the track.
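
That reasoning can be written down as a small Python heuristic. A digital file can only hold frequencies up to half its sample rate (the Nyquist limit), so content that is still strong right at that ceiling suggests the format, not the recording, is the limit. The function names and both thresholds below are our own assumptions for illustration, and we assume the file uses the standard 44,100 Hz rate.

```python
def nyquist_hz(sample_rate_hz: float) -> float:
    """Highest frequency a PCM stream at this sample rate can represent."""
    return sample_rate_hz / 2.0


def likely_limited_by_format(
    cutoff_hz: float,
    sample_rate_hz: float,
    level_at_cutoff_dbfs: float,
    quiet_dbfs: float = -90.0,    # assumed: below this, content is negligible
    near_fraction: float = 0.95,  # assumed: "at the ceiling" means within 5%
) -> bool:
    """Heuristic: content runs right up to Nyquist and is still above the
    assumed quiet threshold, so a higher sample rate might capture more."""
    reaches_ceiling = cutoff_hz >= near_fraction * nyquist_hz(sample_rate_hz)
    still_loud = level_at_cutoff_dbfs > quiet_dbfs
    return reaches_ceiling and still_loud


if __name__ == "__main__":
    print(nyquist_hz(44_100))  # 22050.0
    # Values read off the spectrogram: hard cutoff near 22 kHz at about -60 dBFS.
    print(likely_limited_by_format(22_000, 44_100, -60.0))  # True
    # Hypothetical file whose content stops at 16 kHz, well short of the ceiling.
    print(likely_limited_by_format(16_000, 44_100, -60.0))  # False
```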

To put it simply, your listening experience would conceivably benefit from a higher resolution version of this track, because it reaches the limits of its format (the 44.1 kHz sample rate). Once you understand the thinking process here, you can truly begin to understand if you’re being served the best possible version of a track on a hi-fi streaming service.

How to tell a bad quality audio recording

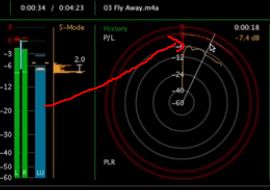

Let’s use the track “Fly Away” by TeddyLoid, in 16-bit 44.1 kHz format. We can hear immediately that the track was mastered very hot.

By looking at the radar graph, we can see that the track peaks continuously for the entire duration of the song, so it is constantly clipping against full-scale. If you played this track through mid-range equipment, it would likely distort a lot.
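
For a local file, you could approximate this check yourself. The sketch below counts runs of consecutive samples pinned at full-scale, which is a common sign of clipping. It works on a plain list of signed integer samples, and the function name and the three-sample threshold are our assumptions. It is not how MusicScope draws its radar graph.

```python
def count_clipped_runs(samples, bit_depth=16, min_run=3):
    """Count runs of at least `min_run` consecutive samples at full-scale.

    samples: signed integer PCM samples from a single channel.
    A lone full-scale sample can be a legitimate peak, so only runs count.
    """
    max_pos = 2 ** (bit_depth - 1) - 1   # 32767 for 16-bit audio
    min_neg = -(2 ** (bit_depth - 1))    # -32768 for 16-bit audio

    runs = 0
    current = 0
    for s in samples:
        if s >= max_pos or s <= min_neg:
            current += 1
        else:
            if current >= min_run:
                runs += 1
            current = 0
    # Do not forget a run that lasts until the very end of the data.
    if current >= min_run:
        runs += 1
    return runs


if __name__ == "__main__":
    # Hypothetical samples: a 3-sample run, a 2-sample run (ignored),
    # and a 4-sample run at the end.
    demo = [0, 32767, 32767, 32767, 100, -32768, -32768, 0,
            32767, 32767, 32767, 32767]
    print(count_clipped_runs(demo))  # 2
```

A cleanly mastered track should return 0 or close to it, while a track that clips for its whole duration will return a large count.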

This track also has an LRA of around 2.3, which means there’s a spread of only 2.3 decibels between its softest and loudest passages. That is an extremely narrow range.

Bad quality or intentional production?

When considering a track like “Fly Away”, we also need to consider whether it is actually a badly mastered track like an amateur production, or if it was intentional. The track “Fly Away” was meant to be a sort of “disposable”, loud dance track. It sounds like it’s being played through bad speakers, which may in fact have been the intention behind the mastering of the track.

Think of it like camera filters. If you take a high-resolution selfie, then apply a sepia filter and add some blur, people might think you took a blurry, bad-quality photo, but it was actually your intention. The same can happen in music production, such as intentionally bad-sounding “garage punk” music.

So, to summarize: we can use MusicScope to determine all kinds of information about a music track, but we should also consider the artist’s intention, and whether a seemingly poor-quality master was actually an artistic choice.
