GPU Boost – Nvidia’s Self-Boosting Algorithm Explained

Graphics card technology has progressed by leaps and bounds over the last few generations. Each generation has brought substantial improvements not only in overall performance but also in the features the cards offer. It is no surprise, then, that Nvidia and AMD both need to keep innovating on the feature sets and underlying technologies of their cards, alongside the generational performance gains of each new lineup.

Clock speed boosting has become a mainstream feature in the PC hardware industry, with both graphics cards and CPUs offering it. Varying a component’s clock speed as conditions inside the PC change can substantially improve both its performance and its efficiency, which ultimately provides a much better user experience. This field has also moved quickly. The standard boosting behavior of graphics cards has been further refined by technologies like GPU Boost 4.0, which arrived with Nvidia’s Turing cards in 2018. These technologies are designed to maximize the performance of the graphics card when it is needed while maintaining peak efficiency under lighter loads.

GPU Boost

So what exactly is GPU Boost? Put simply, GPU Boost is Nvidia’s method of dynamically raising the clock speed of its graphics cards until they hit a predetermined power or temperature limit. The GPU Boost algorithm is a highly specialized, condition-aware algorithm. It makes split-second changes to a large number of parameters to keep the card at its highest possible boost frequency. As a result, the card can boost well beyond the advertised “Boost Clock” listed on the box or the product page.

Before we dive into the mechanism behind this technology, a few important terms need to be explained and distinguished.

Terminology

While shopping for a graphics card, the average consumer might come across a host of numbers and confusing terms. Some make little sense or, even worse, contradict each other. It is therefore worth taking a brief look at what the different clock speed terms on a product page actually mean.

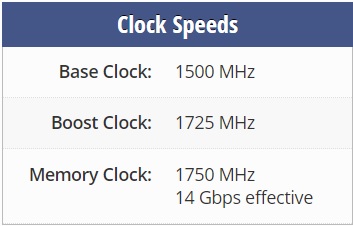

- Base Clock: The Base Clock of a graphics card (sometimes also called the “Core Clock”) is the minimum speed at which the GPU is advertised to run. Under normal conditions, the GPU will not drop below this clock speed unless conditions change significantly. This number mattered more on older cards and is becoming less relevant as boosting technologies take center stage.

- Boost Clock: The advertised Boost Clock is the clock speed the card is rated to reach under typical load. It is not a hard ceiling, since GPU Boost can push past it when conditions allow. This number is generally considerably higher than the Base Clock, and the card uses most of its power budget to achieve it. Unless the card is thermally constrained, it will hit this advertised boost clock. It is also the figure that AIB partners raise on their “Factory Overclocked” cards.

- “Game Clock”: With the launch of its RDNA architecture at E3 2019, AMD also introduced a new concept called Game Clock. At the time of writing, this branding is exclusive to AMD graphics cards. It puts a name to the otherwise arbitrary clock speeds one sees while gaming. Game Clock is the clock speed the card is expected to reach and sustain while gaming, which for AMD cards generally falls between the Base Clock and the Boost Clock. Overclocking the card directly affects this particular clock speed.

Mechanism of GPU Boost

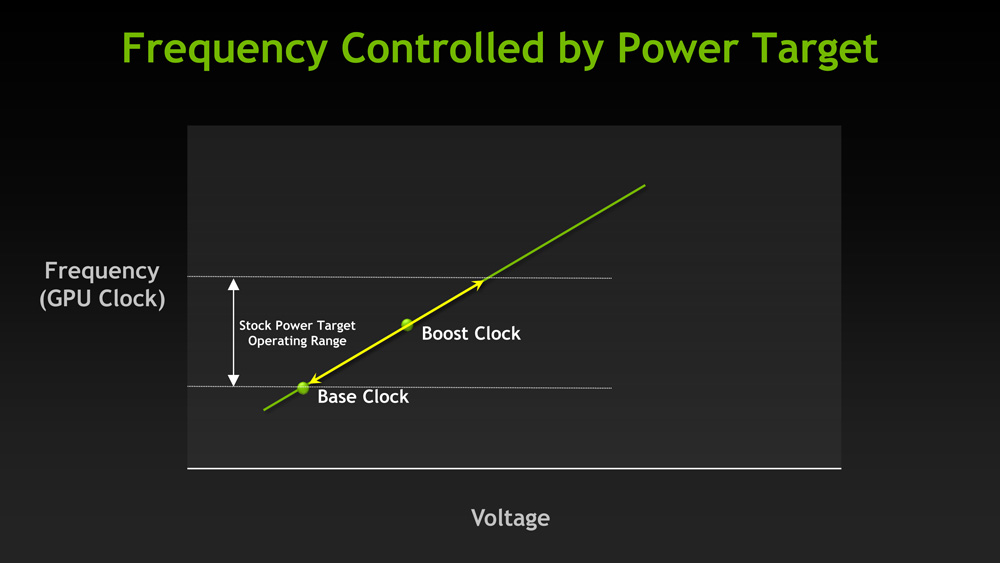

GPU Boost is quite beneficial to gamers and has no significant disadvantages to speak of. When certain conditions are favorable, it raises the effective clock speed of the graphics card beyond the advertised boost frequency. In essence, GPU Boost is automatic overclocking: it pushes the GPU past its advertised “Boost Clock” so the card squeezes out extra performance without the user having to tweak anything. The algorithm is “smart” in that it makes split-second changes to several parameters at once. This keeps the sustained clock speed as high as possible without risking crashes or artifacts. The result is a card that runs higher-than-advertised clock speeds out of the box, effectively an overclocked card with no manual tuning required.
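
To make the idea of a controller that keeps climbing until it hits a limit more concrete, here is a toy simulation. It is not Nvidia’s algorithm, which is proprietary. The linear power and temperature models, the limits, and the names `next_clock` and `simulate` are all assumptions chosen for illustration. The 15 MHz step matches the boost bin size discussed later in this article.

```python
BIN_MHZ = 15  # step size; matches the boost bin size quoted in this article


def next_clock(clock_mhz: int, base_mhz: int, max_mhz: int,
               power_w: float, temp_c: float,
               power_limit_w: float, temp_limit_c: float) -> int:
    """One step of a hypothetical boost controller (not Nvidia's code).

    Drop one bin if any limit is exceeded (never below base clock),
    otherwise climb one bin (never above the top of the curve).
    """
    if power_w > power_limit_w or temp_c > temp_limit_c:
        return max(base_mhz, clock_mhz - BIN_MHZ)
    return min(max_mhz, clock_mhz + BIN_MHZ)


def simulate(power_limit_w: float, steps: int = 200,
             base_mhz: int = 1500, max_mhz: int = 2100,
             temp_limit_c: float = 83.0) -> list[int]:
    """Run the toy controller with made-up linear power/temperature models."""
    clock = base_mhz
    history = []
    for _ in range(steps):
        power_w = 0.12 * clock          # assumed: 0.12 W per MHz
        temp_c = 40.0 + 0.02 * clock    # assumed steady-state temperature
        clock = next_clock(clock, base_mhz, max_mhz, power_w, temp_c,
                           power_limit_w, temp_limit_c)
        history.append(clock)
    return history


if __name__ == "__main__":
    print(simulate(220.0)[-4:])  # [1845, 1830, 1845, 1830]
    print(simulate(300.0)[-1])   # 2100
```

With a 220 W limit, the clock settles into hopping between two adjacent bins around the power limit, loosely mirroring how a real card’s clock hovers under load. With more power headroom, the same loop climbs to the top of its curve.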

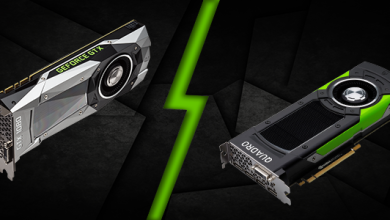

GPU Boost is Nvidia-specific branding, and AMD has a similar technology that works differently. In this piece we focus mainly on Nvidia’s implementation. With its Turing lineup of graphics cards, Nvidia introduced the fourth iteration, GPU Boost 4.0. It lets users manually adjust the curves GPU Boost uses if they see fit. This was not possible with GPU Boost 3.0, where these algorithms were locked inside the drivers, so the added control is good news for overclockers and enthusiasts.

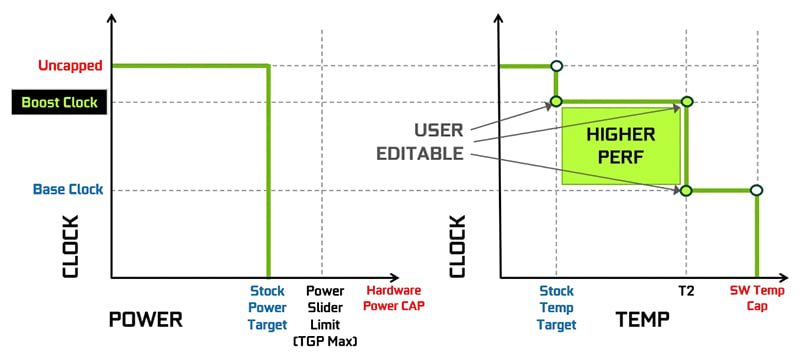

GPU Boost 4.0 also adds finer control in the temperature domain, where new inflection points have been introduced. With GPU Boost 3.0, crossing a certain temperature threshold caused a steep, sudden drop from the boost clock straight down to the base clock. Now there can be multiple steps between the two clock speeds. This finer granularity lets the GPU squeeze out the last bit of performance even under unfavorable conditions.
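
The difference between a single cliff and several inflection points can be sketched as two functions that map GPU temperature to clock speed. The thresholds and step sizes below are invented for illustration, because Nvidia does not publish the actual curve. The function names and the `STEPS` table are hypothetical.

```python
# Hypothetical temperature steps, sorted ascending:
# (threshold in degrees C, fraction of the boost-to-base gap given up
# once that threshold is reached).
STEPS = [(70.0, 0.25), (76.0, 0.50), (80.0, 0.75), (84.0, 1.00)]


def clock_single_cliff(temp_c: float, boost_mhz: int, base_mhz: int,
                       threshold_c: float = 84.0) -> int:
    """GPU Boost 3.0-style behaviour as described in the text:
    boost clock until one threshold, then straight down to base clock."""
    return boost_mhz if temp_c < threshold_c else base_mhz


def clock_stepped(temp_c: float, boost_mhz: int, base_mhz: int,
                  steps=STEPS) -> int:
    """GPU Boost 4.0-style behaviour as described in the text:
    several intermediate steps between boost clock and base clock."""
    fraction = 0.0
    for threshold_c, gap_fraction in steps:
        if temp_c >= threshold_c:
            fraction = gap_fraction  # keep the highest threshold reached
    return round(boost_mhz - fraction * (boost_mhz - base_mhz))


if __name__ == "__main__":
    for t in (65, 72, 78, 82, 86):
        print(t, clock_single_cliff(t, 1900, 1500), clock_stepped(t, 1900, 1500))
```

At 82 °C the single-cliff version still reports the full 1900 MHz, while the stepped version has already eased down to 1600 MHz. At 86 °C both end up at the base clock. The stepped shape trades a little peak clock at moderate temperatures for avoiding one abrupt drop.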

Overclocking a graphics card with GPU Boost is fairly straightforward, and not much has changed in this regard. Any offset added to the core clock is applied to the “Boost Clock”, and the GPU Boost algorithm tries to raise the highest clock speed by a similar margin. Raising the Power Limit slider to the maximum can help significantly here. Stress testing the overclock is a bit more complicated, though, because the user has to keep an eye on clock speeds as well as temperatures, power draw, and voltage. Our comprehensive stress-testing guide can help with that process.
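
For the monitoring side of stress testing, `nvidia-smi` ships with Nvidia’s driver and can report clock speed, temperature, and power draw in CSV form. The Python sketch below polls it and summarizes the readings. The query fields (`clocks.sm`, `temperature.gpu`, `power.draw`, `power.limit`) are standard `nvidia-smi --query-gpu` fields. The helper names and the summary format are our own choices. Voltage is not among the fields used here, and a field reported as `[N/A]` on some cards would make this simple parser raise an error.

```python
import subprocess
import time
from typing import NamedTuple

# Standard nvidia-smi --query-gpu field names.
FIELDS = "clocks.sm,temperature.gpu,power.draw,power.limit"


class Sample(NamedTuple):
    sm_clock_mhz: int
    temp_c: int
    power_w: float
    power_limit_w: float


def parse_line(line: str) -> Sample:
    """Parse one line of `--format=csv,noheader,nounits` output."""
    clock, temp, power, limit = (part.strip() for part in line.split(","))
    return Sample(int(clock), int(temp), float(power), float(limit))


def read_samples() -> list[Sample]:
    """Query every GPU once (nvidia-smi prints one CSV line per GPU)."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_line(line) for line in out.splitlines() if line.strip()]


def summarize(samples: list[Sample]) -> dict:
    """Clock range, peak temperature and peak fraction of the power limit."""
    return {
        "min_clock_mhz": min(s.sm_clock_mhz for s in samples),
        "max_clock_mhz": max(s.sm_clock_mhz for s in samples),
        "peak_temp_c": max(s.temp_c for s in samples),
        "peak_power_fraction": max(s.power_w / s.power_limit_w for s in samples),
    }


if __name__ == "__main__":
    history = []
    for _ in range(60):                     # about one minute at 1 s intervals
        history.append(read_samples()[0])   # first GPU only
        time.sleep(1.0)
    print(summarize(history))
```

Run it while a stress test is going. A `peak_power_fraction` sitting near 1.0 hints that power is the limiting factor. A falling clock alongside a climbing `peak_temp_c` points toward thermal headroom instead.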

Conditions for GPU Boost

Now that we have covered the mechanism behind GPU Boost, it is important to discuss the conditions that must be satisfied for it to be effective. Many conditions affect the final frequency that GPU Boost achieves, but three of them have the most significant impact on its boosting behavior.

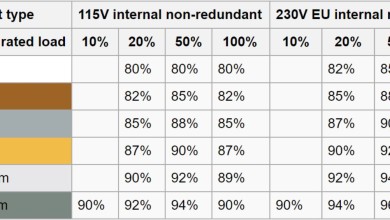

Power Headroom

GPU Boost will overclock the card automatically, provided enough power headroom is available to support the higher clock speeds. Higher clock speeds draw more power from the PSU, so enough power must be available to the graphics card for GPU Boost to work properly. On most modern Nvidia graphics cards, GPU Boost uses all of the power available to it to push clock speeds as high as possible. This makes power headroom the most common limiting factor for the GPU Boost algorithm.

Simply raising the “Power Limit” slider to the maximum in an overclocking utility can have a big impact on the final frequencies the card reaches. The extra power afforded to the card is used to push the clock speed even higher, which shows how heavily the GPU Boost algorithm depends on power headroom.
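
A common first-order way to reason about this is the dynamic power relation for CMOS chips, P ≈ k·V²·f: power grows linearly with frequency and with the square of voltage. The sketch below applies it to a short, made-up voltage/frequency curve. It finds the highest point that fits under a power limit at several slider settings. The curve, the constant `K_W_PER_MHZ_V2`, and the 220 W stock limit are all hypothetical. Real cards also draw static power and use far more curve points.

```python
# Hypothetical V/F curve: (clock in MHz, voltage in V), ascending.
VF_CURVE = [(1500, 0.800), (1650, 0.850), (1800, 0.900),
            (1950, 0.975), (2055, 1.050)]
K_W_PER_MHZ_V2 = 0.13  # made-up constant so the numbers land in a plausible range


def estimated_power_w(clock_mhz: float, voltage_v: float) -> float:
    """Dynamic power model P = k * V^2 * f (static power ignored)."""
    return K_W_PER_MHZ_V2 * voltage_v ** 2 * clock_mhz


def max_clock_under_limit(power_limit_w: float, curve=VF_CURVE) -> int:
    """Highest curve point whose estimated power fits under the limit.
    Falls back to the lowest point if none fits."""
    best = curve[0][0]
    for clock_mhz, voltage_v in curve:
        if estimated_power_w(clock_mhz, voltage_v) <= power_limit_w:
            best = clock_mhz
    return best


if __name__ == "__main__":
    stock_limit_w = 220.0
    for slider_pct in (100, 115, 140):
        limit_w = stock_limit_w * slider_pct / 100
        print(slider_pct, limit_w, max_clock_under_limit(limit_w))
```

In this toy model, moving the slider from 100% to 115% lifts the reachable point from 1800 MHz to 1950 MHz. The V² term is why each extra step up the curve costs disproportionately more power.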

Voltage

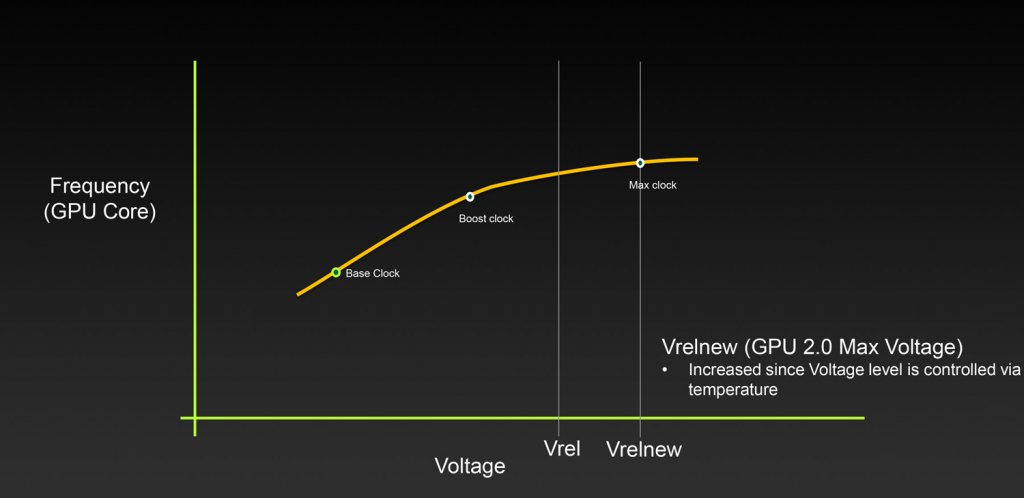

The graphics card’s power delivery system must be able to supply the additional voltage needed to reach and sustain higher clock speeds. Voltage also contributes directly to temperature, so this condition is tied to thermal headroom as well. Either way, there is a hard limit on how much voltage the card can use, and that limit is set by the card’s BIOS. GPU Boost uses any available voltage headroom to sustain the highest clock speed it can.
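
One way to picture the BIOS voltage cap is as a ceiling on the voltage/frequency curve. Whatever clock the curve assigns to the capped voltage is the most the card can reach, however much power or thermal headroom remains. The sketch below linearly interpolates between the points of a hypothetical curve. The points and the function name are made up for illustration, and real curves have many more points.

```python
from bisect import bisect_right

# Hypothetical V/F points: (voltage in V, clock in MHz), ascending by voltage.
VF_POINTS = [(0.800, 1500), (0.850, 1650), (0.900, 1800),
             (0.975, 1950), (1.050, 2055)]


def max_clock_for_voltage_cap(cap_v: float, points=VF_POINTS) -> float:
    """Clock the curve allows at the capped voltage (linear interpolation,
    clamped to the ends of the curve)."""
    volts = [v for v, _ in points]
    if cap_v <= volts[0]:
        return float(points[0][1])
    if cap_v >= volts[-1]:
        return float(points[-1][1])
    i = bisect_right(volts, cap_v)          # index of first point above the cap
    (v0, f0), (v1, f1) = points[i - 1], points[i]
    return f0 + (f1 - f0) * (cap_v - v0) / (v1 - v0)


if __name__ == "__main__":
    for cap in (0.900, 1.000, 1.200):
        print(cap, round(max_clock_for_voltage_cap(cap)))
```

A cap of 1.0 V lands between two curve points at about 1985 MHz. Any cap above the curve’s last point simply returns its top clock, because the curve itself ends there.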

Thermal Headroom

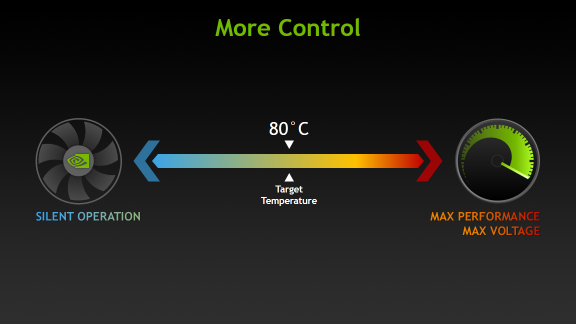

The third major condition for GPU Boost to operate effectively is adequate thermal headroom. GPU Boost is extremely sensitive to GPU temperature, raising and lowering the clock speed in response to even slight temperature changes. Keeping the GPU as cool as possible is therefore key to achieving the highest clock speeds.

Temperatures above 75 degrees Celsius start to lower the clock speed noticeably, which can affect performance. At these temperatures the clock speed will likely still be above the Boost Clock, but there is no point in leaving performance on the table. Adequate case ventilation and a good cooling system on the GPU itself can therefore have a significant impact on the clock speeds GPU Boost achieves.
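
A first-order way to see why better cooling helps is the thermal resistance model. In it, steady-state temperature is roughly the ambient temperature plus power multiplied by the cooler’s thermal resistance (°C per watt). The sketch below pairs that model with a hypothetical rule of losing one 15 MHz bin for every started 5 °C above 75 °C. The 5 °C step, the thermal resistances, and the clock figures are invented for illustration and are not Nvidia’s numbers.

```python
import math

BIN_MHZ = 15  # bin size quoted in this article


def steady_state_temp_c(power_w: float, ambient_c: float,
                        thermal_resistance_c_per_w: float) -> float:
    """First-order model: T = T_ambient + P * R_th."""
    return ambient_c + power_w * thermal_resistance_c_per_w


def clock_after_thermal_bins(max_boost_mhz: int, temp_c: float,
                             start_c: float = 75.0,
                             c_per_bin: float = 5.0) -> int:
    """Hypothetical rule: lose one bin for every started c_per_bin degrees
    above start_c; no loss at or below start_c."""
    if temp_c <= start_c:
        return max_boost_mhz
    bins_lost = math.ceil((temp_c - start_c) / c_per_bin)
    return max_boost_mhz - bins_lost * BIN_MHZ


if __name__ == "__main__":
    for name, r_th in (("good cooler", 0.20), ("weak cooler", 0.27)):
        t = steady_state_temp_c(220.0, 25.0, r_th)
        print(name, round(t, 1), clock_after_thermal_bins(1950, t))
```

At the same 220 W load, the better cooler keeps the GPU near 69 °C with no bins lost. The weaker one runs at about 84 °C and gives up two bins.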

Boost Binning and Thermal Throttling

An interesting phenomenon intrinsic to the operation of GPU Boost is known as boost binning. As discussed, the GPU Boost algorithm rapidly changes the GPU’s clock speed depending on various factors. These changes happen in blocks of 15 MHz, and each 15 MHz step is known as a boost bin. You can easily observe that GPU Boost clock readings differ from one another in multiples of 15 MHz, depending on power, voltage, and thermal headroom. In other words, changing the underlying conditions raises or lowers the card’s clock speed 15 MHz at a time.
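
Because clock changes come in whole bins, two readings taken under different conditions should differ by a whole multiple of the bin size. The helpers below snap a clock onto a bin grid anchored at a reference clock and count how many bins separate two readings. The 15 MHz size is the figure quoted in this article. The reference clock and the function names are hypothetical.

```python
BIN_MHZ = 15  # bin size quoted in this article


def snap_to_bin(clock_mhz: int, reference_mhz: int, bin_mhz: int = BIN_MHZ) -> int:
    """Round a clock down onto the grid reference_mhz + k * bin_mhz."""
    return reference_mhz + ((clock_mhz - reference_mhz) // bin_mhz) * bin_mhz


def bins_between(higher_mhz: int, lower_mhz: int, bin_mhz: int = BIN_MHZ) -> int:
    """Number of whole bins separating two readings on the same grid."""
    delta = higher_mhz - lower_mhz
    if delta % bin_mhz:
        raise ValueError(f"{delta} MHz is not a whole number of {bin_mhz} MHz bins")
    return delta // bin_mhz


if __name__ == "__main__":
    print(snap_to_bin(1940, reference_mhz=1950))  # 1935
    print(bins_between(1950, 1905))               # 3
```

A drop from 1950 MHz to 1905 MHz is three bins. A reading that does not fit the grid, such as 1940 MHz against a 1950 MHz reference, raises an error in `bins_between`. In practice that would suggest the reading was averaged or sampled mid-transition.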

Thermal throttling is also worth exploring in the context of GPU Boost. The graphics card does not actually start thermal throttling until it reaches a set temperature limit known as Tjmax. This limit usually lies somewhere between 87 and 90 degrees Celsius on the GPU core, and the exact number is set by the GPU’s BIOS. When the core reaches this temperature, clock speeds drop gradually until they fall even below the base clock. That is a sure sign of thermal throttling rather than the regular boost binning done by GPU Boost. The key difference is that thermal throttling pushes the clock speed to or below the base clock. Boost binning, by contrast, uses temperature data to adjust the maximum clock speed that GPU Boost achieves.
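
The distinction drawn here can be turned into a rough rule of thumb for reading monitoring logs. A clock at or below the base clock while the core sits at Tjmax points to thermal throttling, while clock movement above the base clock is ordinary boost binning. The function below encodes that rule. The third label, for low clocks without high temperature, is our addition: clocks also fall far below base when the card is idle. The 88 °C Tjmax in the example is just a value inside the 87 to 90 °C range quoted above.

```python
def classify_clock(clock_mhz: int, temp_c: float,
                   base_mhz: int, tjmax_c: float) -> str:
    """Rule of thumb from the text: at/below base clock at Tjmax means
    thermal throttling; movement above base clock is boost binning."""
    if clock_mhz <= base_mhz:
        if temp_c >= tjmax_c:
            return "thermal throttling"
        return "below base clock for another reason (e.g. idle or another limit)"
    return "boost binning"


if __name__ == "__main__":
    base, tjmax = 1500, 88.0  # hypothetical card
    for clock, temp in ((1905, 70.0), (1650, 87.0), (1455, 89.0), (300, 35.0)):
        print(clock, temp, classify_clock(clock, temp, base, tjmax))
```

This is deliberately coarse. A real log would also need power draw and load information to tell an idle card from one held back by another limit.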

Drawbacks

There are not many drawbacks to this technology, which in and of itself is a bold thing to say about a graphics card feature. GPU Boost raises the card’s clock speeds automatically, without any user input, and unlocks the card’s full potential by providing extra performance at no extra cost. However, there are a few things to keep in mind if you own an Nvidia graphics card with GPU Boost.

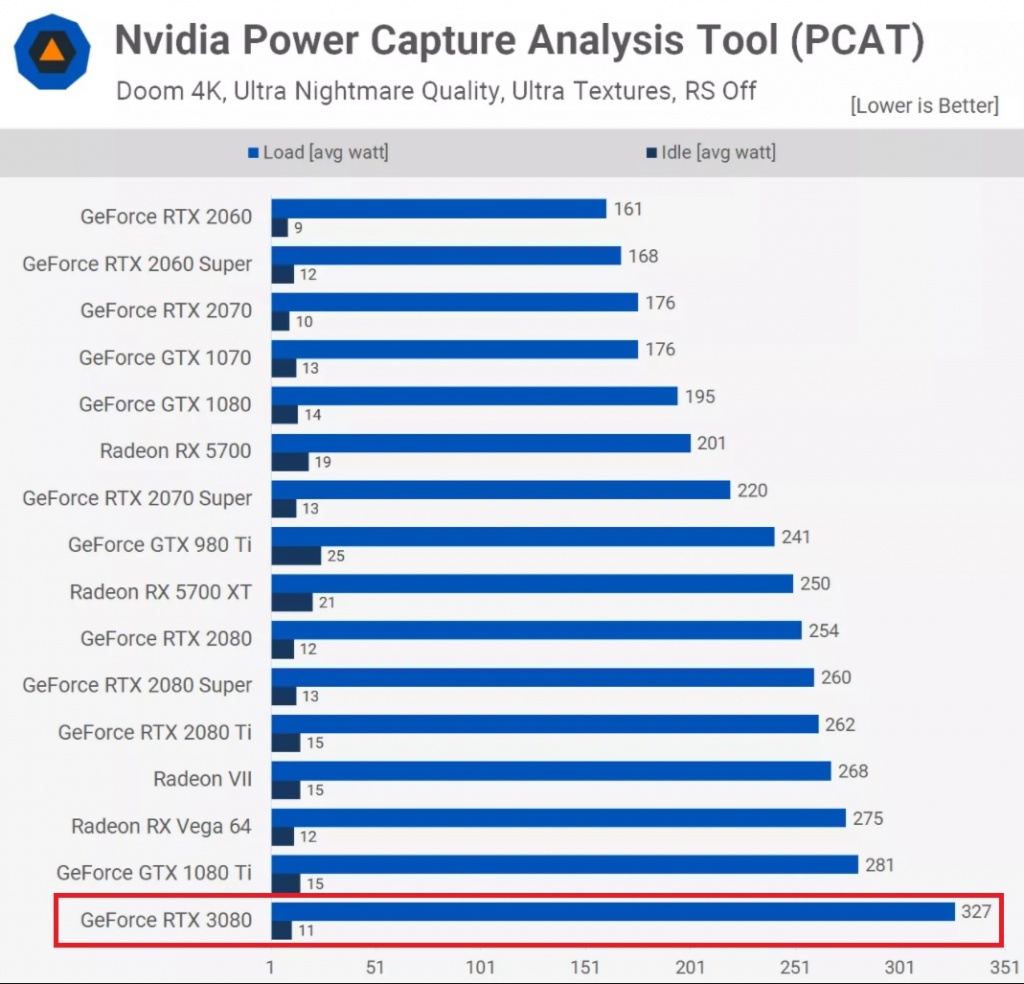

Because the card uses its entire power budget, its power draw will be higher than the advertised TBP or TGP figures might lead you to believe. In addition, the extra voltage and power draw lead to higher temperatures, since the card overclocks itself by using the thermal headroom available to it. Temperatures will not become dangerously high, however. Once they cross a certain limit, voltage and power draw are reduced to compensate for the extra heat.

Final Words

Rapid progress in graphics card technology has put some extremely impressive features into the hands of consumers, and GPU Boost is certainly one of them. Nvidia’s feature (and AMD’s similar one) lets graphics cards reach their full potential without any user input, delivering the best possible out-of-the-box performance. It all but eliminates the need for manual overclocking, because GPU Boost manages the card so well that little headroom is left for manual fine-tuning.

Overall, GPU Boost is an excellent feature. We would like to see it keep improving as Nvidia refines the core algorithm that micromanages these tiny adjustments to different parameters in search of the best possible performance.