RTX 4080 Reviews Are Here, Fast But At What Cost

The reviews for NVIDIA’s brand-new RTX 4080 ‘16GB’ have gone live, drawing mixed reactions from experts and reviewers. NVIDIA has seen its fair share of criticism from the community over the insanely high Ada Lovelace prices. The RTX 4080 16GB, despite offering a huge jump in performance over Ampere, just adds fuel to the fire. The age of GPU mining is long gone, yet NVIDIA seems oblivious to this fact, as its pricing strategy shows.

RTX 4080, High Performance But At What Cost

Going over the general specifications for the RTX 4080, we see the AD103-300-A1 GPU paired with 16GB of G6X memory. A 76SM count puts it slightly lower than the full AD103 GPU. Over a 256-bit memory bus, the 22.5Gbps of memory amounts to 720GB/s of effective bandwidth.

The FP32 shaders stand at 9728 CUDA cores, close to the RTX 3090 Ti. The rated TGP for this GPU is 320W, with a maximum that goes as high as 516W. The pricing yet again is a question mark at $1199. Board partner variants with their added margins can push this number into $1600 territory without breaking a sweat.
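The spec figures above can be cross-checked with a little arithmetic. The snippet below is a minimal sketch, not an official NVIDIA formula: the function names are made up for illustration, and the 128 FP32 cores per SM is an assumption about the Ada architecture that the reviews summarized here do not state.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def fp32_cores(sm_count: int, cores_per_sm: int = 128) -> int:
    """CUDA core count, assuming 128 FP32 cores per SM (assumption, see lead-in)."""
    return sm_count * cores_per_sm


bandwidth = memory_bandwidth_gb_s(bus_width_bits=256, data_rate_gbps=22.5)
cores = fp32_cores(sm_count=76)

print(f"Bandwidth: {bandwidth:.0f} GB/s")
print(f"CUDA cores: {cores}")
```

With the figures quoted in this article, the sketch reproduces both the 720GB/s bandwidth and the 9728 CUDA core count.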

Performance-wise, we have included various results from reviewers such as Hardware Unboxed and Linus Tech Tips for a short summary of where the RTX 4080 stands.

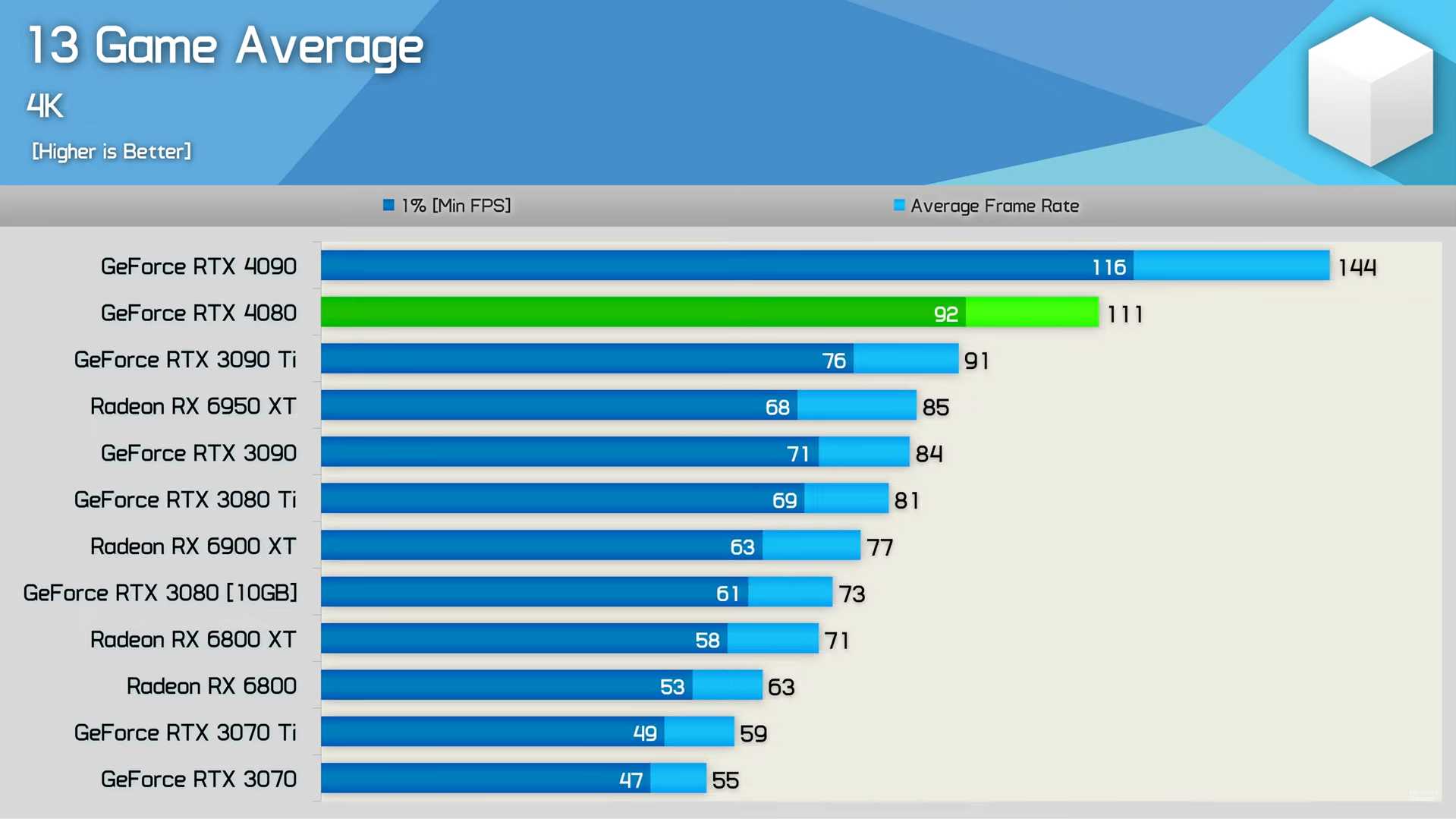

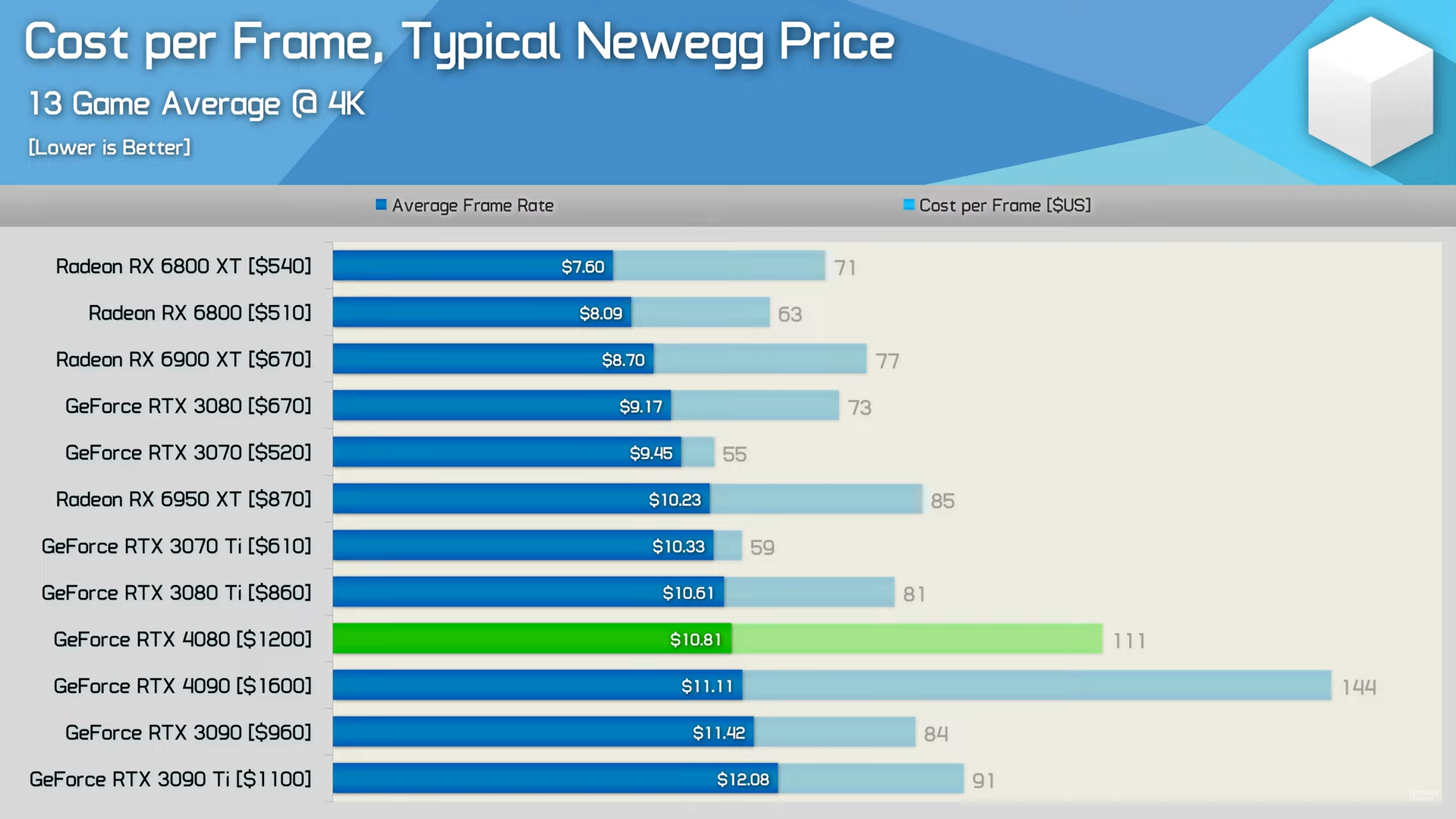

Starting off, over 13 games at 4K, the RTX 4080 manages to dethrone Ampere’s best by almost 22%. That is an impressive feat considering the wattage difference between the two. It does lose to the RTX 4090, and not by a small margin. The RTX 4080 delivers 70% of the RTX 4090’s performance at 75% of the price.
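To put that last comparison into a single number, the relative performance can be divided by the relative price. This is a rough sketch using only the two percentages quoted above; the function name is hypothetical and the result says nothing about street prices.

```python
def perf_per_dollar_ratio(relative_perf: float, relative_price: float) -> float:
    """Performance per dollar of card A relative to card B.

    Both inputs are fractions of card B (1.0 means equal to card B).
    """
    return relative_perf / relative_price


# RTX 4080 vs. RTX 4090, using the article's 70% performance / 75% price figures.
ratio = perf_per_dollar_ratio(relative_perf=0.70, relative_price=0.75)

print(f"RTX 4080 performance per dollar vs. RTX 4090: {ratio:.3f}")
```

A result below 1.0 means the cheaper card is actually the worse deal per dollar. Here it works out to roughly 0.93, so the RTX 4080 offers about 7% less performance per dollar than the RTX 4090 at these figures.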

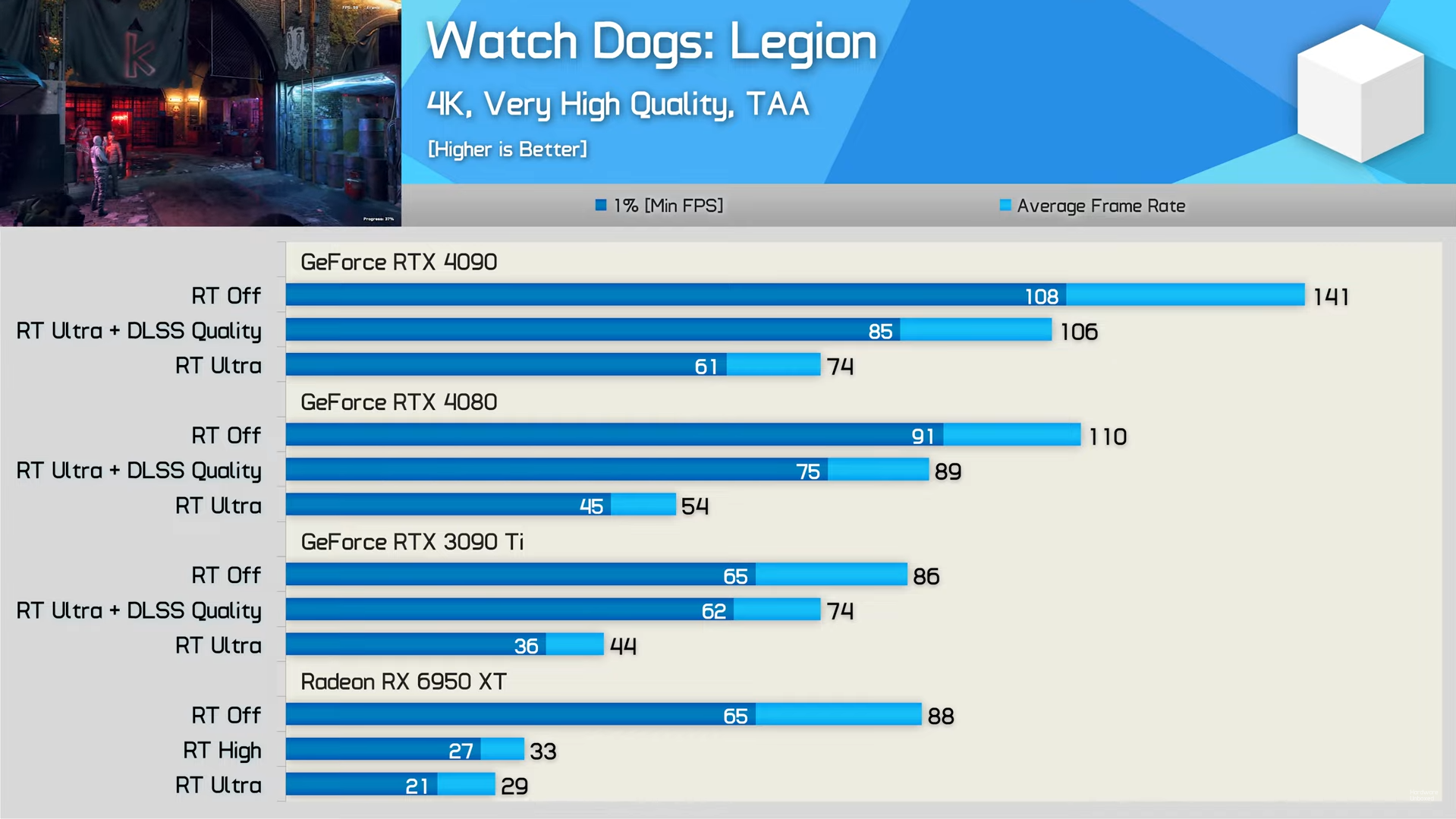

To showcase the ray-tracing improvements, we also have some more data courtesy of Hardware Unboxed. First up, in Watch Dogs: Legion at 4K, the RTX 4080 is 22% faster than the RTX 3090 Ti. AMD’s 6950XT is out of the question here due to lackluster ray-tracing performance. Against the RTX 4090, the 4080 is almost 27% slower in pure ray tracing.

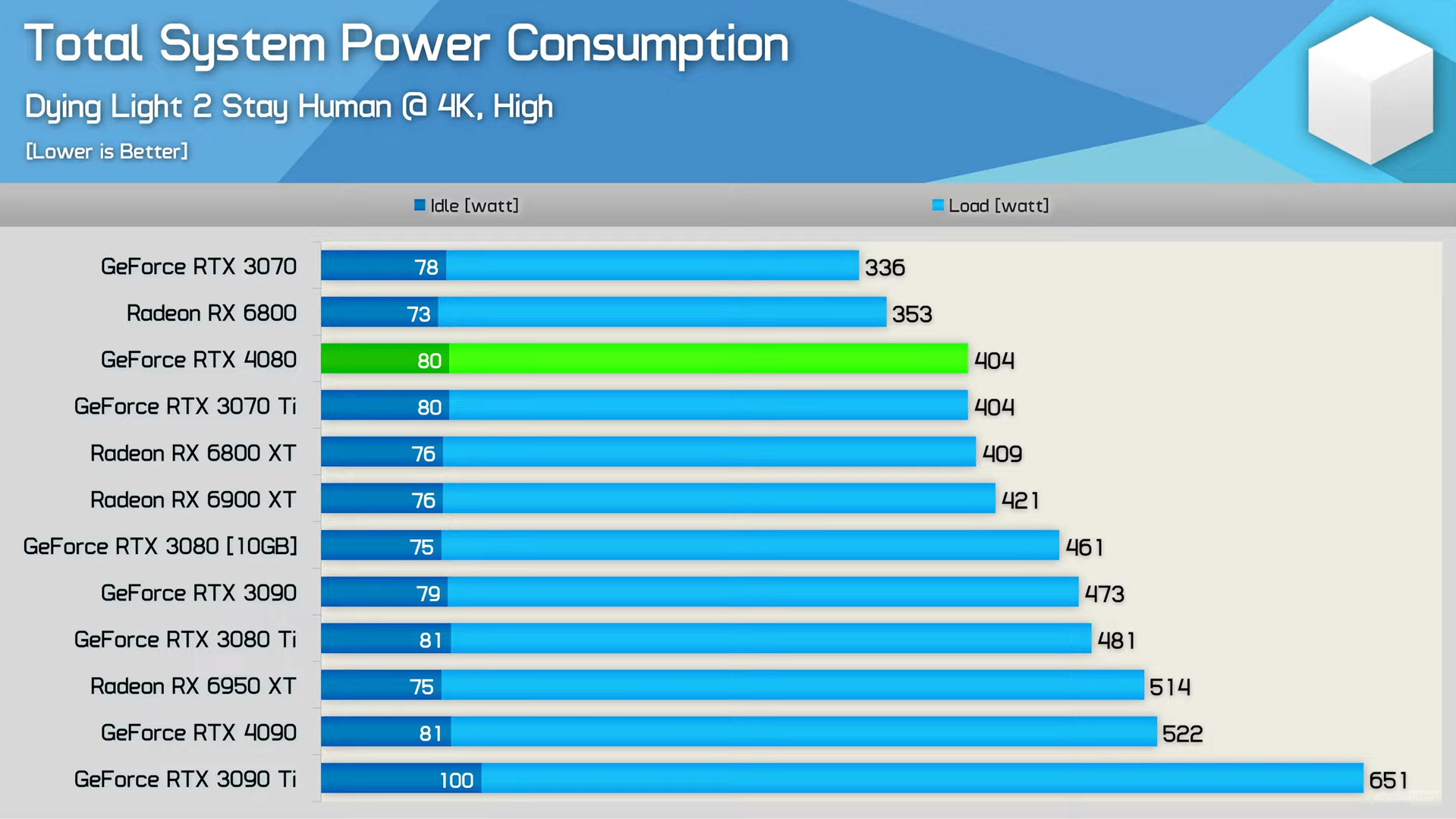

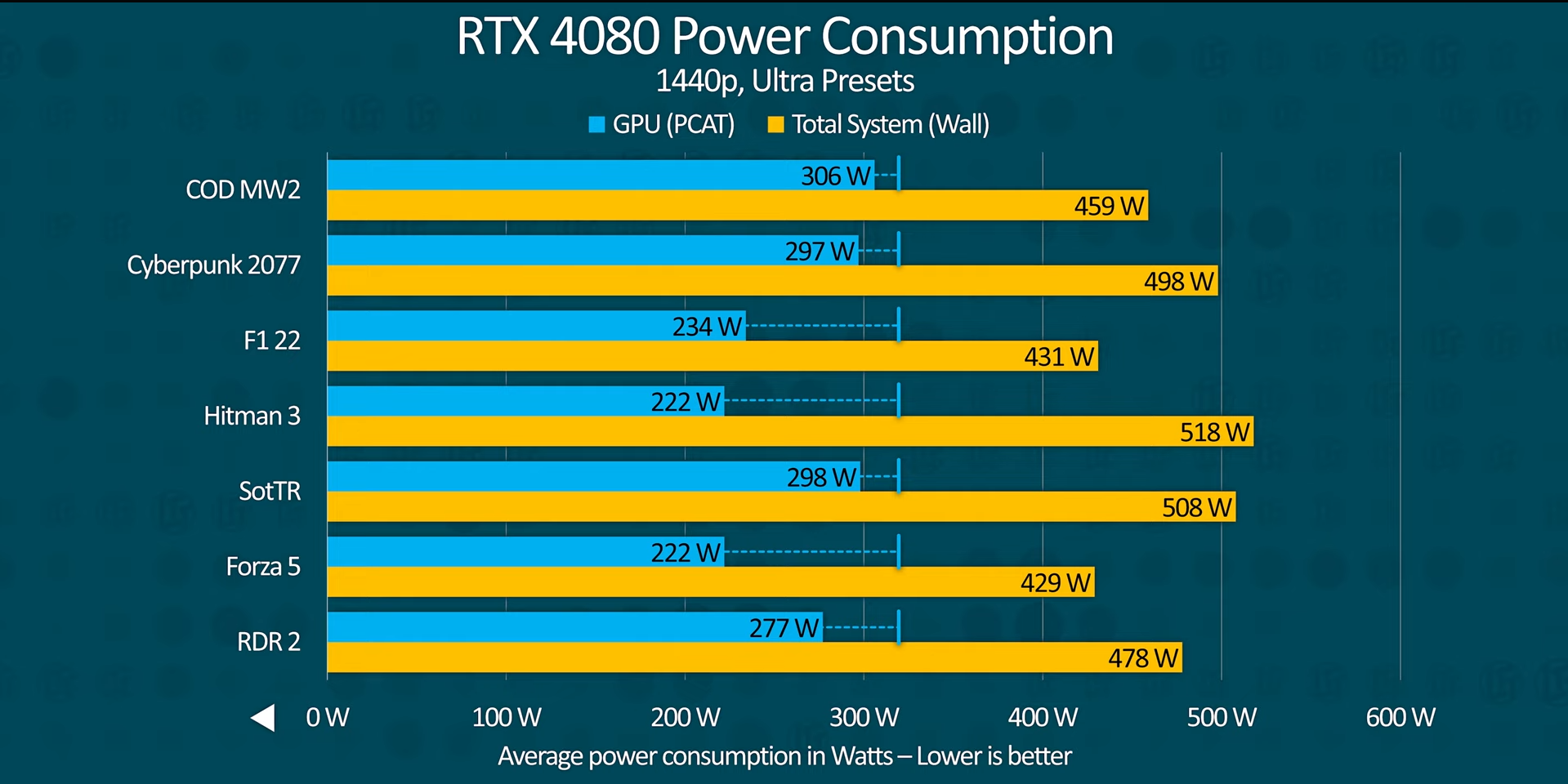

In Dying Light 2 at 4K High, the RTX 4080-equipped system draws 404W, on par with the RTX 3070 Ti system. The generation-on-generation efficiency improvements are massive, considering the RTX 3090 Ti system is chugging 651W of power and still remains slower than the RTX 4080.
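The gap between those two total-system power figures is easy to quantify. The snippet below is a small sketch with hypothetical helper names; it only compares the 404W and 651W readings quoted above and makes no claim about frame rates.

```python
def power_saving(baseline_watts: float, new_watts: float) -> float:
    """Fraction of power saved by the new system relative to the baseline."""
    return (baseline_watts - new_watts) / baseline_watts


def extra_power(baseline_watts: float, new_watts: float) -> float:
    """Fraction of additional power the baseline draws compared to the new system."""
    return baseline_watts / new_watts - 1


rtx_3090_ti_system_w = 651  # total system draw, Dying Light 2 at 4K High
rtx_4080_system_w = 404     # total system draw, same test

saving = power_saving(rtx_3090_ti_system_w, rtx_4080_system_w)
extra = extra_power(rtx_3090_ti_system_w, rtx_4080_system_w)

print(f"RTX 4080 system draws {saving:.1%} less power")
print(f"RTX 3090 Ti system draws {extra:.1%} more power")
```

In other words, the RTX 4080 system uses roughly 38% less power, or equivalently the RTX 3090 Ti system pulls about 61% more.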

A Productivity Champ

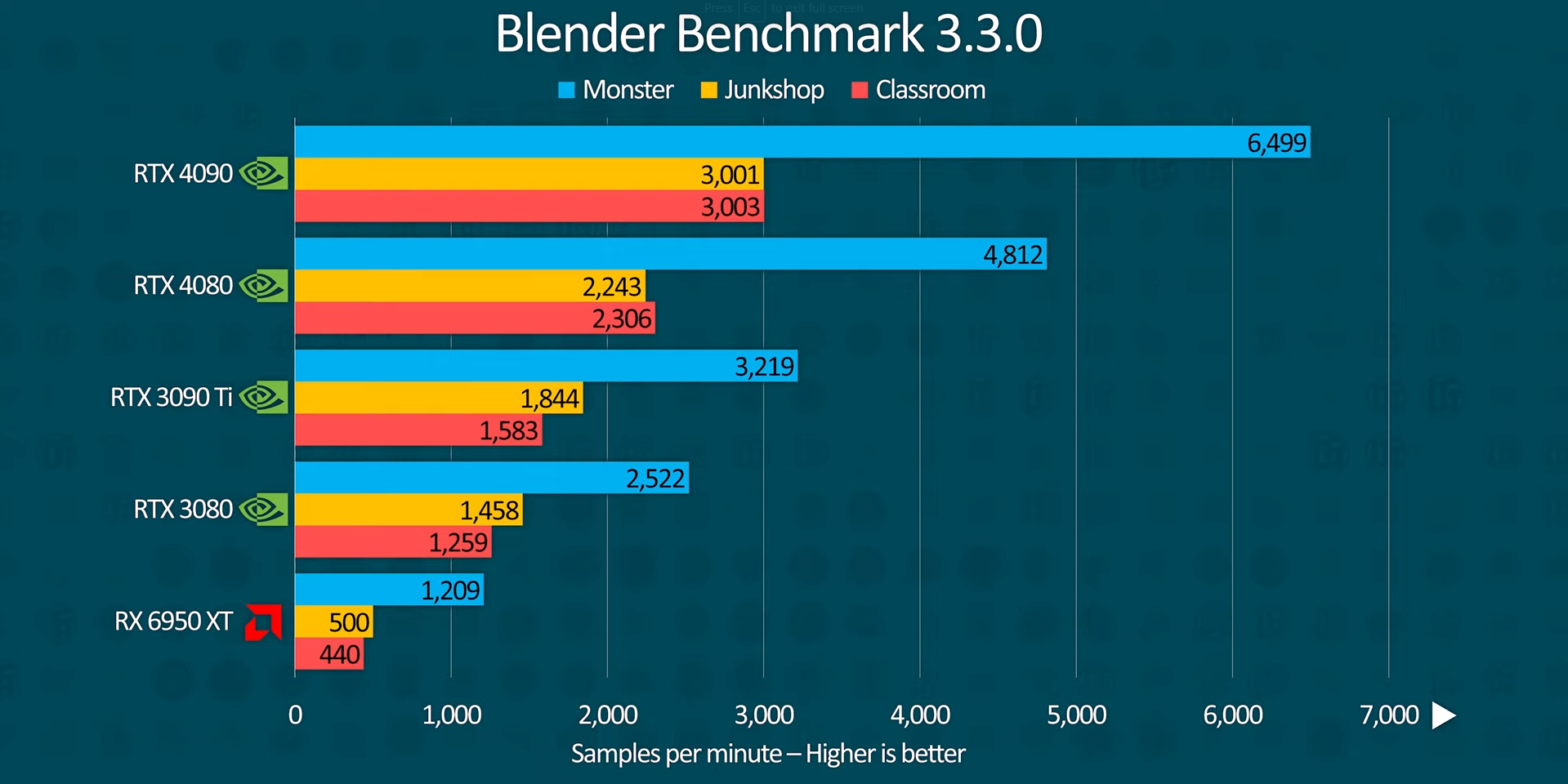

In the Blender Benchmark 3.3.0, the RTX 4080 flexes its muscles by surpassing the RTX 3090 Ti, and not by a small margin. 4,812 samples per minute make it 50% faster than the best Ampere offers.

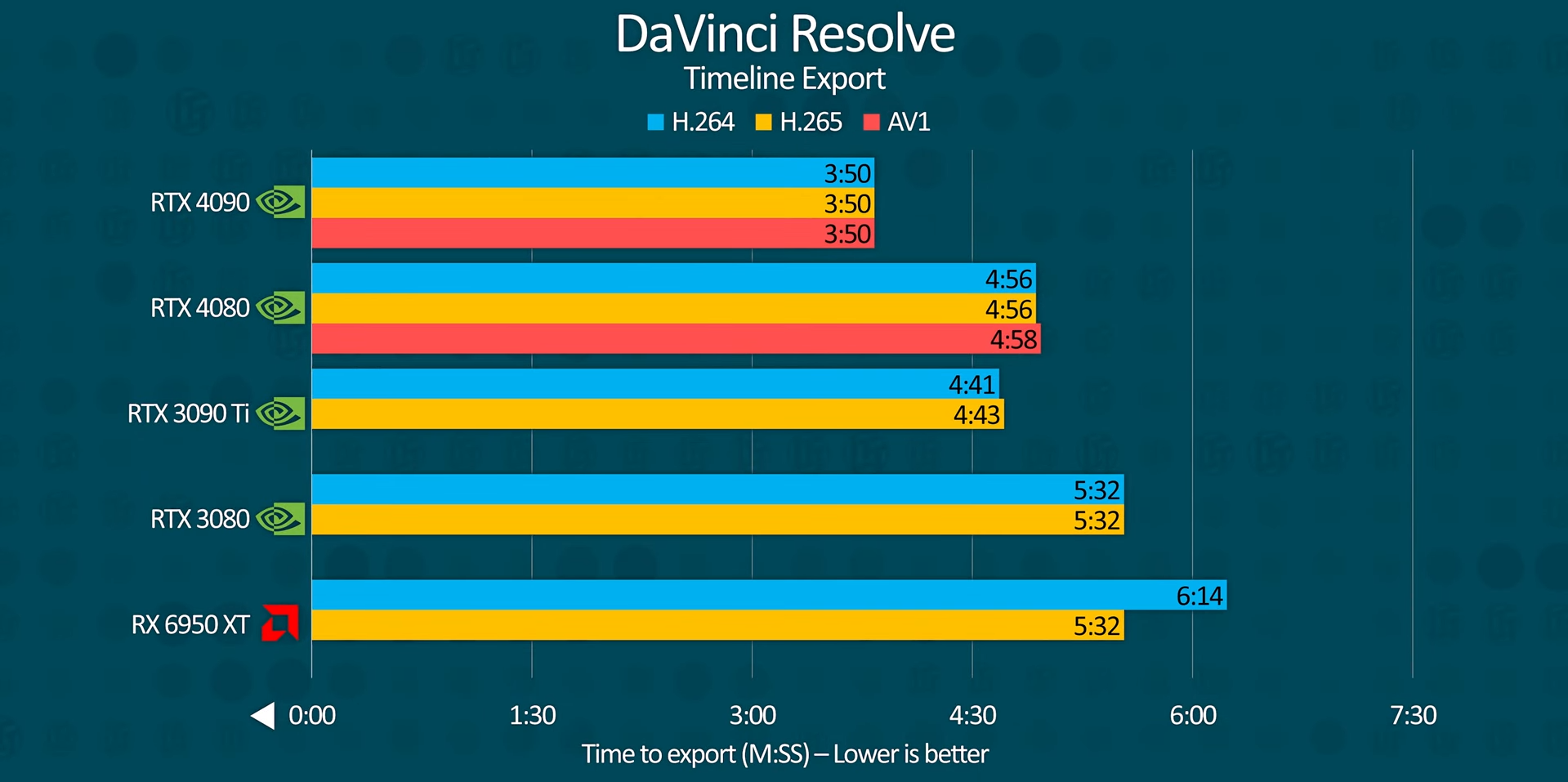

The RTX 4080 does lose to the RTX 3090 Ti in DaVinci Resolve, although the inclusion of the AV1 encoder/decoder makes it stand out among other GPUs. Currently, only the RTX 4090, the RTX 4080 and Intel’s Arc cards can export using this codec, which gives the 4080 a huge edge. That said, streamers should be aware that most websites such as YouTube and Twitch do not support this format (so far).

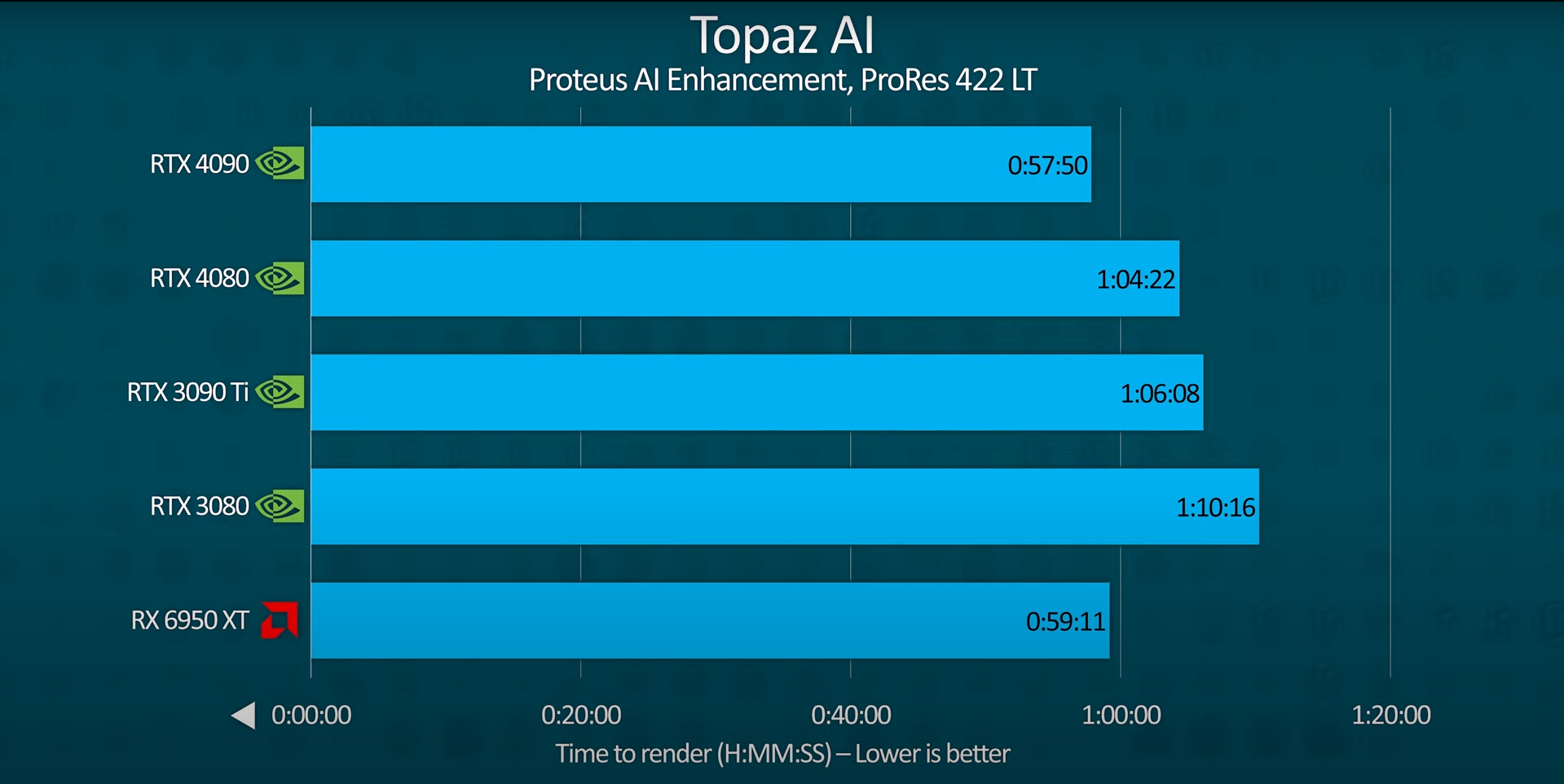

AMD surprisingly manages to beat the RTX 4080 with a last-gen product in Topaz AI. LTT assumes this is probably because Topaz is geared more towards raw compute power.

Insanely Efficient

One thing about the RTX 4080 is that it is extremely power efficient. Don’t believe us? Well, here’s the word from NVIDIA itself via LTT. TGP, or Total Graphics Power, was until now merely a power target, meaning the power the GPU tried to consume under load. NVIDIA has redefined TGP so that it is now a power limit, meaning the RTX 4080 will almost always stay under its specified 320W TGP. Quite frankly, the chart below really does speak for itself.

Can It Compete Against RDNA3?

Although the RTX 4080 is a massive improvement over Ampere, AMD’s RDNA3-based 7900XTX/XT are a major threat to it. The first thing anyone would point out is that the RTX 4080 is $200 more expensive than AMD’s best offering. Since we have very few benchmarks for the 7900XTX, we cannot draw a proper comparison right now.

That said, the 7900XTX is expected to be 20-25% slower than the RTX 4090, which would put it more than 5% ahead of the RTX 4080. The RX 7900 XT is a different story, quite possibly on par with or slower than the RTX 4080. Even with these small setbacks, AMD is currently offering much better value if MSRP prices hold at retail.
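The "more than 5% faster" estimate follows from combining two figures in this article: the expected 20-25% deficit of the 7900XTX against the RTX 4090, and the RTX 4080 sitting at 70% of the RTX 4090 in the 13-game 4K average. The sketch below assumes those two numbers can be compared directly, which is a simplification since they come from different sources and test suites; the function name is hypothetical.

```python
def relative_to_4080(deficit_vs_4090: float, rtx_4080_vs_4090: float = 0.70) -> float:
    """Performance of a card relative to the RTX 4080.

    deficit_vs_4090: how much slower the card is than the RTX 4090 (0.25 = 25%).
    rtx_4080_vs_4090: RTX 4080 performance as a fraction of the RTX 4090.
    """
    return (1 - deficit_vs_4090) / rtx_4080_vs_4090


# Expected range for the 7900XTX: 25% slower (worst case) to 20% slower (best case).
worst_case = relative_to_4080(0.25)
best_case = relative_to_4080(0.20)

print(f"7900XTX vs. RTX 4080: {worst_case - 1:.1%} to {best_case - 1:.1%} faster")
```

Under these assumptions, the 7900XTX would land roughly 7% to 14% ahead of the RTX 4080, which is consistent with the estimate above.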

The ‘4080’ Disaster, And How It Could Have Been Avoided

For starters, Jensen Huang initially announced 2 different RTX 4080s at the Lovelace reveal. The difference between them was supposed to be minimal, although the specifications told otherwise. Basically, NVIDIA introduced a new chip tier, ‘XX103’, for the 4080 16GB, which had not been seen before.

The RTX 4080 12GB, on the other hand, featured a much slower AD104. So in the high-end segment, NVIDIA was offering a mid-range chip for $899. The cherry on top was the 192-bit memory bus. For comparison, the last 3 80-class GPUs featured a 320-bit/256-bit/256-bit bus respectively. How the community took that is another story. In the end, we did see this GPU getting canceled.

The question still stands: what went so wrong that for $899, you were supposedly being offered NVIDIA’s 4th best effort? Was it inflation? America’s highest inflation for the year 2022 was recorded in June, standing at 9.1% (Source: Statista). A 10% increase still does not justify this price tag. Was it the expensive node? Jensen claims, and I quote:

“Moore’s Law is dead… A 12-inch wafer is a lot more expensive today. The idea that the chip is going to go down in price is a story of the past.”

~ Jensen Huang to PC World’s Gordon Ung

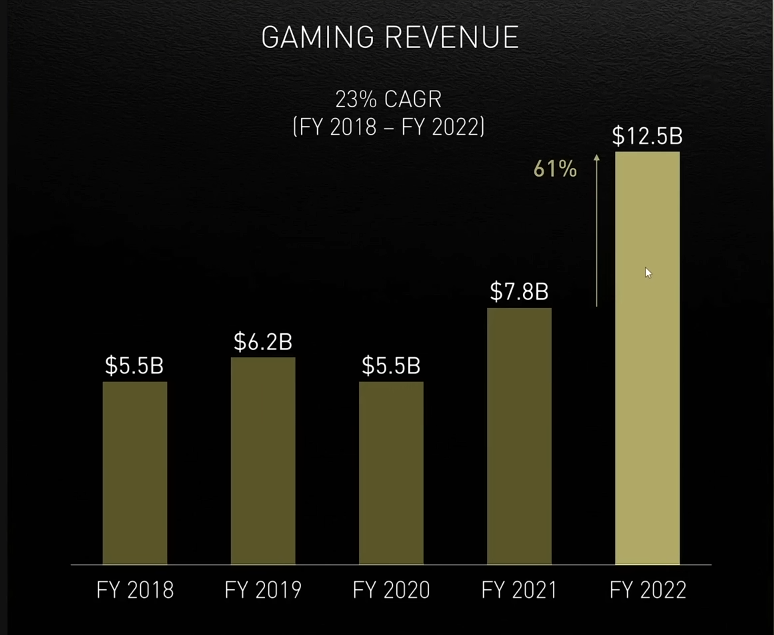

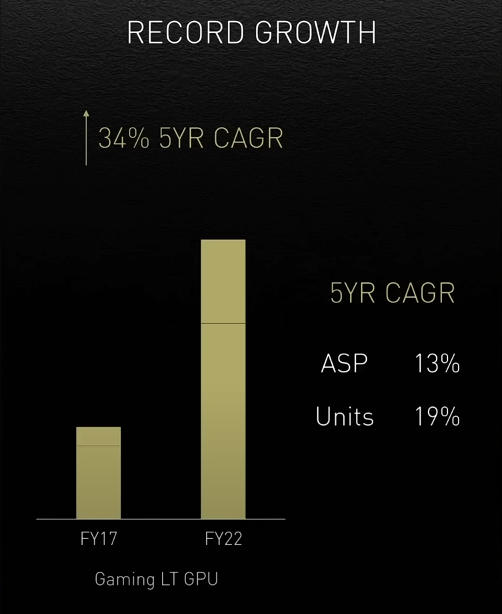

If we put 2 and 2 together, the implication is that NVIDIA simply wasn’t making enough money due to the increase in chip costs. That surely was not the case at NVIDIA’s Investor Day, where they proudly mentioned their profit gains due to the increase in GPU prices. It is true that a 12-inch wafer is much more expensive today than it was a while back. But the astronomical increase in GPU prices far outpaces the increase in wafer cost.

The thing about the GPU market is that a company’s current reputation speaks volumes. If we talk about NVIDIA, the company has been the target of mass criticism, and not without reason. Ada is undoubtedly much faster than what people anticipated. But the pricing and NVIDIA’s attitude toward consumers have not been that inviting. When a company straight up tells its consumers that GPU prices are not coming down while its competitor launches a product cheaper than last generation’s, what can you do to save face?

Worth Your Money?

The RTX 4080 is priced at $1199, which as stated above will go higher if you opt for AIB variants. This GPU straight-up destroys the RTX 3090 Ti in both performance and efficiency. However, not many are willing to spend more than $1000 on an 80-class GPU when last generation’s RTX 3080 came in at just $699. The high-end Ampere GPUs, apart from the 3090/3090 Ti, still offer better value than the RTX 4080.
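For a sense of scale, the generational price jump can be worked out from the two MSRPs above and set against the 9.1% inflation figure quoted earlier in this article. This is only a rough sketch: the helper name is hypothetical, and using a single peak inflation reading as a yardstick is a simplification rather than a proper inflation adjustment.

```python
def percent_increase(old_price: float, new_price: float) -> float:
    """Fractional price increase from old_price to new_price."""
    return (new_price - old_price) / old_price


rtx_3080_msrp = 699    # USD, last generation's 80-class card
rtx_4080_msrp = 1199   # USD
peak_inflation = 0.091  # June 2022 figure cited in this article (rough yardstick only)

increase = percent_increase(rtx_3080_msrp, rtx_4080_msrp)

print(f"80-class MSRP increase: {increase:.1%}")
print(f"Multiple of the 9.1% inflation figure: {increase / peak_inflation:.1f}x")
```

The increase comes out to roughly 71.5%, several times the inflation figure, which is why inflation alone does not explain the new price.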

NVIDIA is not only competing with itself, but against RDNA3 as well. AMD so far has delivered, and even if the performance is 5-10% slower than expected, it will eat into NVIDIA’s market share. The thing with AMD is that they’re not aiming for the fastest performance but for the best price-to-performance ratio.

Without stretching the argument longer than it needs to be, the RTX 4080 is a buy only for those who know what they’re purchasing. If you have the cash and desperately need features like DLSS 3.0 and CUDA performance for workloads, this GPU is worth your money. For gamers, however, AMD’s offerings are worth waiting for. Besides, within a few days, we’ll know whether this $1199 MSRP holds or not, because that certainly was not the case with the RTX 4090.

Sources: Linus Tech Tips, Hardware Unboxed, AdoredTV