Nvidia NVLink vs SLI – Differences and Comparison

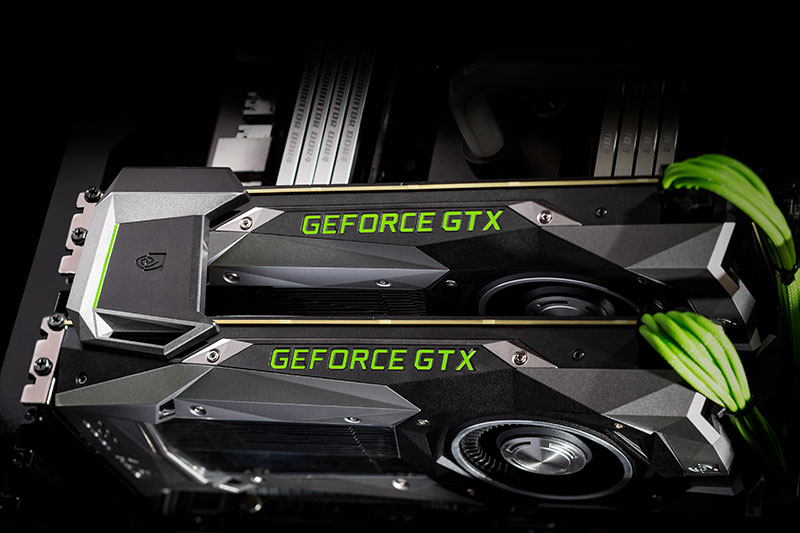

Ever since it became possible to use multiple graphics cards in a system, enthusiasts have craved beastly machines with 2, 3, or even 4 graphics cards installed at the same time. PCs with multiple graphics cards were considered the absolute best that the industry had to offer. We even saw multiple GPUs crammed onto a single card, as with the Nvidia GeForce GTX 690 and the AMD Radeon R9 295X2. But for all the hype and glory, multi-GPU systems never really took off, and their popularity declined abruptly soon after it peaked. Several factors were involved in this decline, and one of them was the unreliability of the bridging system used to connect the graphics cards to each other.

Nvidia’s technique for connecting two or more graphics cards is known as SLI, which stands for Scalable Link Interface. The SLI name originated with 3dfx Interactive, which introduced it in 1998 as “Scan-Line Interleave”. Nvidia acquired 3dfx’s assets and reintroduced the name in 2004 with its new expansion. Basically, SLI is the brand name for Nvidia’s multi-GPU technology, which links two or more graphics cards to produce a single output using a parallel processing algorithm. Nvidia’s rival AMD introduced its own version of the technology, known as CrossFire.

How does SLI Work?

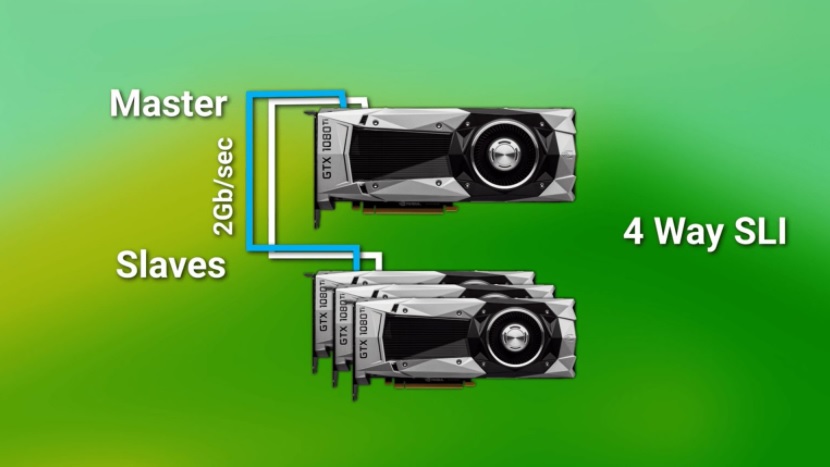

Graphics cards in an SLI system work in a master-slave configuration: one of the cards is assigned the role of “master” even though the load is distributed equally among all cards. When the “slave” card finishes its rendering, it sends its output to the master, which combines the two renders and delivers the final image to the monitor. In a two-card configuration, the master card usually handles the top half of the image while the slave card handles the bottom half.

The two (or more) cards are connected via the SLI Bridge, also called the SLI Connector. The main purpose of the SLI Bridge is to give the cards a dedicated lane of communication. It is possible to run multiple cards without the bridge, but the results are usually very poor. The SLI Bridge eases the bandwidth constraints by sending data directly between the cards. The three types of SLI Bridges are:

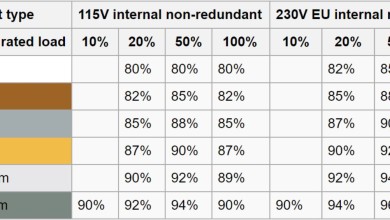

- Standard Bridge (400 MHz Pixel Clock, 1GB/s bandwidth): This is the standard bridge included with motherboards that support SLI. It is recommended for monitors up to 1920×1080 and 2560×1440@60 Hz.

- LED Bridge (540 MHz Pixel Clock): Recommended for monitors up to 2560×1440@120 Hz+ and 4K. It can only operate on an increased Pixel Clock if the GPU supports that clock. It is sold by Nvidia as well as a variety of AIB partners.

- High-Bandwidth Bridge or SLI HB Bridge (650 MHz Pixel Clock and 2GB/s Bandwidth): This is the fastest bridge and is sold exclusively by Nvidia. It’s recommended for monitors up to 5K and Surround. SLI HB Bridges are only available in 2-way configurations so setups with more than 2 cards are out of luck here.

Mechanism of Action of SLI

SLI works using two main methods. One of them is SFR, or Split Frame Rendering, in which the system analyzes the rendered image to divide the load between the GPUs connected in SLI. The frame is split in two along a horizontal line, and the placement of that line depends on the geometry of the scene. The main deciding factor is how much rendering work the scene demands in different areas. If one of the cards is rendering the top portion of a scene that is mostly sky, which is cheap to render, the line is placed lower in the image to compensate for the load difference between the two cards.
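The load-balancing idea behind SFR can be sketched in a few lines of Python. This is only an illustration, not how Nvidia's driver actually places the line: the per-row cost values and the function name `sfr_split_row` are hypothetical, and a real driver would estimate the load from the scene rather than receive it as a list.

```python
from itertools import accumulate


def sfr_split_row(row_costs):
    """Pick the horizontal split so both GPUs get roughly equal work.

    row_costs[i] is an assumed rendering cost for pixel row i (top to bottom).
    Returns the number of rows given to the top GPU; the bottom GPU renders
    the remaining rows.
    """
    total = sum(row_costs)
    best_split, best_diff = 0, total  # split 0 = top GPU renders nothing
    for i, top_cost in enumerate(accumulate(row_costs)):
        # Difference between the work above and below the candidate line.
        diff = abs(top_cost - (total - top_cost))
        if diff < best_diff:
            best_split, best_diff = i + 1, diff
    return best_split


# Hypothetical 10-row frame: six cheap "sky" rows, four expensive "terrain" rows.
row_costs = [1, 1, 1, 1, 1, 1, 3, 3, 3, 3]
print(sfr_split_row(row_costs))  # 7: the line sits below the middle of the frame
```

With the cheap sky rows on top, the top GPU is handed seven of the ten rows, which matches the behaviour described above: the line moves down so that both cards end up with a similar amount of work.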

The other method is AFR, or Alternate Frame Rendering, which is pretty straightforward: each GPU renders every other frame. One of the cards renders all the odd frames and the other renders all the even frames. AFR generally delivers a better framerate than SFR, but it introduces a new kind of artifact known as micro stuttering. Even if the framerate is sufficiently high, micro stuttering can hurt the perceived smoothness of the image by introducing mini stutters between frames, caused by the variable pace at which the cards operate in AFR.
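A toy model makes the micro stuttering effect easier to see. The sketch below assumes two GPUs that each need the same fixed time per frame, with the second GPU starting a little later than the first. The function names and the timing values are made up for illustration and do not come from any real driver.

```python
def afr_present_times(n_frames, render_ms, offset_ms):
    """Completion time of each frame when two GPUs alternate frames.

    GPU 0 renders frames 0, 2, 4, ... and GPU 1 renders frames 1, 3, 5, ...
    offset_ms is the assumed delay between GPU 0 and GPU 1 starting work.
    """
    times = []
    for i in range(n_frames):
        start_delay = offset_ms if i % 2 == 1 else 0.0
        times.append(start_delay + (i // 2 + 1) * render_ms)
    return times


def frame_intervals(times):
    """Gap between each pair of consecutive frames."""
    return [b - a for a, b in zip(times, times[1:])]


# Evenly staggered GPUs: every frame arrives 10 ms after the previous one.
print(frame_intervals(afr_present_times(5, render_ms=20, offset_ms=10)))
# [10.0, 10.0, 10.0, 10.0]

# Poorly staggered GPUs: same average rate, but the gaps alternate.
print(frame_intervals(afr_present_times(5, render_ms=20, offset_ms=4)))
# [4.0, 16.0, 4.0, 16.0]
```

Both runs average one frame every 10 ms, yet in the second case the viewer sees alternating 4 ms and 16 ms gaps, so the motion can feel closer to the slower interval than the average framerate suggests.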

Rise and Fall of SLI

SLI shot up in popularity during the Kepler, Maxwell, and Pascal generations of graphics cards, but it has been in constant and drastic decline ever since. Even during the era of Nvidia’s Pascal-based GTX 1000 series, the popularity of SLI was already dwindling. Sure, SLI graphics cards can deliver better performance than a single GPU, and they even look fantastic when installed inside a case, but SLI came with more problems than advantages.

First of all, you had to verify that your motherboard supported SLI. This was a vital step, as some motherboards supported SLI, some supported CrossFire, some supported both, and others supported neither. After that, you needed identical graphics cards. This was a tough one, since it skewed the value proposition when compared to a better performing single graphics card. The graphics cards had to be the same model and series, and early on even from the same manufacturer, although that requirement was eased somewhat later on. Running multiple graphics cards also required a beefy and expensive Power Supply Unit, and thermals needed to be monitored and controlled because several power-hungry cards were running in close proximity to each other.

The real Achilles’ heel of the whole SLI ecosystem was game support. Not all games supported SLI, and even those that did delivered unimpressive performance gains given the additional investment. In the majority of cases, you would have been much better off buying a single, more powerful graphics card rather than 2 weaker ones and putting them in SLI. Over the years, support for SLI has gotten even worse, with developers rarely giving it a thought in modern games. Finally, the killer blow came from none other than Nvidia itself, which ended support for SLI on the majority of its graphics card lineup. As of the Ampere lineup of GPUs, only the best of the best, the RTX 3090, supports SLI. This means that SLI for general consumers is effectively dead as far as gaming is concerned.

What is NVLink?

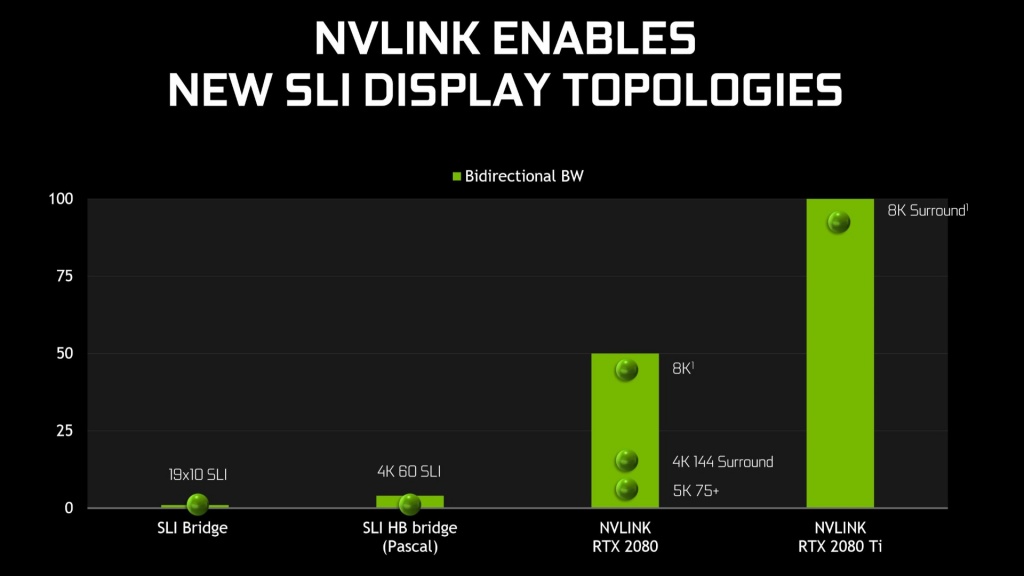

With the Turing lineup of graphics cards, Nvidia brought a new technology for connecting multiple graphics cards to its consumer GPUs: NVLink. It is an interface with many times the bandwidth of the old SLI interface, and it also has many additional quirks and features. Technically speaking, it is a wire-based, serial, multi-lane, near-range communications link. Quite a mouthful, isn’t it? In more general terms, it is an improved connection interface between multiple Nvidia graphics cards that promises more advantages and features than the old SLI.

NVLink can be thought of as Nvidia’s attempt to claw back the multi-GPU market share that had dwindled away by 2020. This technology promises to make gaming on multiple graphics cards a desirable and highly efficient option in the near future. NVLink is essentially a much faster bridge than SLI and aims to close the latency gap between the two graphics cards. Nvidia’s Technical Marketing Director Tom Petersen says the following in this regard:

“That bridge is visible to games, that means that maybe there’s going to be an app that looks at one GPU and looks at another GPU and does something else,” explains Petersen. “The problem with multi-GPU computing is that the latency from one GPU to another is far away. It’s got to go across PCIe, it’s got to go through memory, it’s a real long-distance from a transaction perspective” … “NVLink fixes all that. So NVLink brings the latency of a GPU-to-GPU transfer way down. So it’s not just a bandwidth thing, it’s how close is that GPU’s memory to me. That GPU that’s across the link… I can kind of think about that as my brother rather than a distant relative.”

He further added that NVLink was more of a plan for the future than a current solution:

“Think of the bridge more as we want to lay a foundation for the future,” … “And once that works, and we get our bridges deployed, and people understanding that hey, this is a 100GB/s bridge, then game developers will see that.”

It seems that Nvidia is banking on the future of NVLink rather than the present. Nvidia knows that multi-GPU support is fairly disappointing right now, so it wants to introduce a technology that will attempt to change not only the perspective of game developers but also the views of general consumers on multi-GPU support.

How does NVLink Work?

Unlike SLI, NVLink uses mesh networking, a network topology in which the infrastructure nodes connect directly to one another in a non-hierarchical way. Each node can relay information itself rather than routing it through one particular node. The nodes can also self-organize dynamically, which allows workloads to be distributed dynamically. This mechanism is the source of NVLink’s main strength, which is its speed.

NVLink has also done away with the master-slave relationship of the SLI implementation. Instead, it treats each node equally, which tremendously improves rendering speed. Unlike SLI, NVLink keeps both graphics cards’ memories accessible at all times, thanks to its mesh network implementation. Essentially, the link between NVLink cards is bi-directional and the two connected cards act as one.

The speed of the NVLink interface is reflected in the bandwidth of its bridges. While even the best SLI Bridges topped out at 2 GB/s, an NVLink Bridge promises a mind-boggling 200 GB/s in some cases. Granted, the 160 and 200 GB/s NVLink bridges are restricted to Nvidia’s professional-grade GPUs, the Quadro GP100 and the GV100 respectively, but these numbers are still a testament to the incredible leap in bandwidth of the NVLink interface over SLI. Top-tier enthusiast GPUs like the TITAN RTX or the RTX 2080 Ti can expect a potential bandwidth of a whopping 100 GB/s in most cases.
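To put those numbers in perspective, here is a rough back-of-the-envelope calculation in Python. It assumes an uncompressed 4K frame at 4 bytes per pixel and treats the quoted bandwidths as sustained rates with no latency or protocol overhead, which real links will not achieve. The helper `transfer_ms` is hypothetical and exists only for this example.

```python
def transfer_ms(num_bytes, bandwidth_gb_s):
    """Ideal time in milliseconds to move num_bytes over a link.

    Assumes 1 GB = 1e9 bytes and ignores latency and protocol overhead.
    """
    return num_bytes / (bandwidth_gb_s * 1e9) * 1000.0


# Assumed payload: one uncompressed 3840x2160 frame at 4 bytes per pixel.
frame_bytes = 3840 * 2160 * 4  # 33,177,600 bytes, about 33 MB

for name, gb_s in [("SLI HB Bridge", 2), ("NVLink (consumer)", 100)]:
    print(f"{name}: {transfer_ms(frame_bytes, gb_s):.2f} ms")
# SLI HB Bridge: 16.59 ms
# NVLink (consumer): 0.33 ms
```

Under these assumptions, moving a single 4K frame over a 2 GB/s bridge would eat up almost an entire 60 Hz frame interval (about 16.7 ms), while the same transfer over a 100 GB/s link takes a fraction of a millisecond.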

Is NVLink Worth It?

So has Nvidia’s NVLink finally fixed the problems of multi-GPU rendering in games? Well, the answer is still no. NVLink has made some strides in the right direction, particularly in the way both cards are leveraged compared to SLI, but the problem with SLI never really was the methodology. The real problem with the multi-GPU ecosystem is game support. Even with NVLink, most games out there today have a hard time leveraging more than one GPU. Taking into account the cost of two graphics cards and the little-to-no performance improvement in most scenarios, it would be unwise to invest in NVLink for consumer applications such as games.

For professional work, NVLink might be just the upgrade that was needed. For people working with Nvidia’s Quadro GPUs or cards like the RTX A6000, NVLink can be a big help, not only because it improves rendering performance but also because it makes the total memory of both cards available, which can matter a great deal in professional workloads.

For most gamers though, choosing a single graphics card that offers more performance out-of-the-box is a better idea than buying two weaker cards and connecting them via NVLink or SLI for that matter.

Final Words

Nvidia’s SLI was an interesting and attractive technology when it was first launched and soon gained popularity due to its potential and somewhat decent support from developers during the Maxwell and Pascal era. However, SLI’s peak was short-lived as the industry swiftly moved forward and SLI was left in the past with both the developers and the gamers abandoning the technology in favor of single, more powerful GPUs.

Nvidia tried to reinvent the multi-GPU market by launching NVLink, which is a much-improved connection over SLI, but it still fails to solve many of the issues that plagued SLI in the first place. This makes it a hard recommendation for gamers in 2020, when more powerful single graphics cards can reliably deliver a better experience at a lower cost.

SLI from 3DFx days stands for “scan line interleave”, which is exactly what the 3DFx technology did.

The term you apply, i.e. scalable link interface, is newer and more of an Nvidia marketing term to distinguish their product from the Voodoo.

You won’t find that newer descriptor in any of the 3DFx materials describing their technology.

This technology will be a boon to VR users, though, when the ability to use individual video cards to support each VR screen separately eventually matures: aka VRWorks or LiquidVR.