NVIDIA Announces Grace-Hopper GH200 Superchip With 282GB of HBM3e Enabling 10TB/s of Combined Bandwidth & 3x Faster Memory Bandwidth

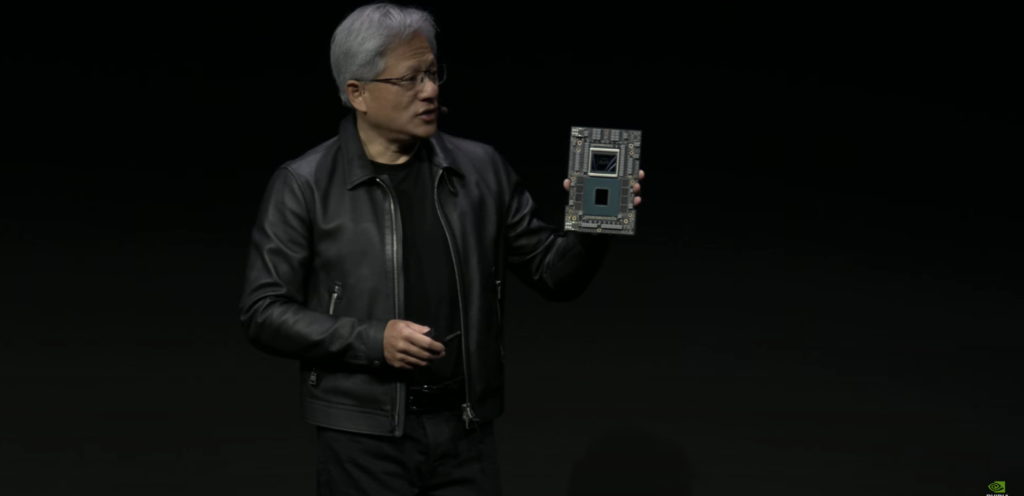

Jensen Huang unveiled the Grace Hopper Superchip, codenamed GH200, in May. The Grace Hopper Superchip is essentially a CPU and a GPU on a single package. NVIDIA has now decided to upgrade the GH200 with HBM3e memory, which gives the package 3.5x more memory capacity and 3x more bandwidth than the current offering.

NVIDIA Announces GH200 With HBM3e

Most readers already know the Hopper side of things through NVIDIA’s H100. Since the AI boom began, the H100 has found itself in a strong position with 80 billion transistors and 80GB of memory. To compete against AMD’s Instinct MI300X, NVIDIA announced Grace Hopper a few months back. That offering now gets a boost and will arrive with the industry-leading HBM3e.

The CPU and GPU are connected via NVIDIA’s NVLink-C2C technology, which allows a CPU-GPU bandwidth of 900GB/s, far faster than traditional PCIe Gen5 lanes.
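
To put the 900GB/s figure in context, here is a rough back-of-the-envelope sketch comparing it with a PCIe Gen5 x16 link. The PCIe numbers (32 GT/s per lane, 128b/130b encoding, an x16 slot counted in both directions) are assumptions taken from the PCIe Gen5 specification rather than from NVIDIA's announcement, and the helper name is purely illustrative.

```python
# Rough comparison of NVLink-C2C with a PCIe Gen5 x16 link.
# Assumptions (not from the article): PCIe Gen5 runs at 32 GT/s per lane
# with 128b/130b encoding, and an x16 slot is the comparison point.

NVLINK_C2C_GBPS = 900.0  # GB/s, CPU-GPU bandwidth quoted in the article


def pcie_gen5_bandwidth_gbps(lanes: int = 16) -> float:
    """Approximate bidirectional PCIe Gen5 bandwidth in GB/s (hypothetical helper)."""
    gigatransfers_per_lane = 32.0      # GT/s per lane, per direction
    encoding_efficiency = 128 / 130    # 128b/130b line encoding overhead
    per_direction = gigatransfers_per_lane * lanes * encoding_efficiency / 8
    return 2 * per_direction           # count both directions


pcie_x16 = pcie_gen5_bandwidth_gbps()
speedup = NVLINK_C2C_GBPS / pcie_x16
print(f"PCIe Gen5 x16: ~{pcie_x16:.0f} GB/s, NVLink-C2C advantage: ~{speedup:.1f}x")
```

Under these assumptions the NVLink-C2C link comes out at roughly seven times the bandwidth of a PCIe Gen5 x16 connection.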

In its dual configuration, the upgraded GH200 boasts 144 Arm Neoverse cores, 8 petaflops of AI performance from the Hopper GPUs and 282GB of HBM3e. The Grace CPU is equipped with ~600GB of LPDDR5X memory. In a dual configuration, the total accessible memory comes out to 1.2 terabytes.
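
The 3.5x capacity claim can be sanity-checked against the 80GB H100 mentioned earlier. The short sketch below does that arithmetic. The even split of the 282GB across two GPUs is an assumption based on the dual configuration and is not stated explicitly in the announcement.

```python
# Sanity check of the "3.5x more memory capacity" claim.
H100_MEMORY_GB = 80     # H100 memory, as quoted in the article
GH200_HBM3E_GB = 282    # HBM3e on the upgraded GH200, as quoted in the article
GPUS_IN_DUAL_CONFIG = 2 # assumption: the 282GB is spread across two GPUs

capacity_ratio = GH200_HBM3E_GB / H100_MEMORY_GB
hbm3e_per_gpu_gb = GH200_HBM3E_GB / GPUS_IN_DUAL_CONFIG

print(f"Capacity vs. H100: ~{capacity_ratio:.1f}x")
print(f"HBM3e per GPU (assuming an even split): {hbm3e_per_gpu_gb:.0f}GB")
```

The ratio works out to a little over 3.5x, which matches the headline figure.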

“To meet surging demand for generative AI, data centers require accelerated computing platforms with specialized needs,” said Jensen Huang, founder and CEO of NVIDIA. “The new GH200 Grace Hopper Superchip platform delivers this with exceptional memory technology and bandwidth to improve throughput, the ability to connect GPUs to aggregate performance without compromise, and a server design that can be easily deployed across the entire data center.”

HBM3e is almost 50% faster than HBM3, and as a result NVIDIA is able to achieve 10TB/s of combined bandwidth.
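
The step from "50% faster" to "10TB/s combined" can be sketched numerically. The 3.35TB/s HBM3 baseline (the figure for the H100 SXM) and the two-GPU dual configuration are assumptions brought in for illustration. Neither number appears in the text above.

```python
# How a ~50% faster memory could add up to ~10TB/s of combined bandwidth.
HBM3_BANDWIDTH_TBPS = 3.35  # assumption: H100 SXM HBM3 bandwidth
HBM3E_SPEEDUP = 1.5         # "almost 50% faster", as quoted in the article
GPUS_IN_DUAL_CONFIG = 2     # assumption: combined figure spans two GPUs

per_gpu_tbps = HBM3_BANDWIDTH_TBPS * HBM3E_SPEEDUP
combined_tbps = per_gpu_tbps * GPUS_IN_DUAL_CONFIG
bandwidth_ratio = combined_tbps / HBM3_BANDWIDTH_TBPS

print(f"Per GPU: ~{per_gpu_tbps:.1f}TB/s, combined: ~{combined_tbps:.0f}TB/s")
print(f"Combined bandwidth vs. a single H100: ~{bandwidth_ratio:.0f}x")
```

Under these assumptions each GPU lands at about 5TB/s, the pair at about 10TB/s, and the combined figure is about three times the single-H100 baseline, in line with the 3x claim.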

Release Date

NVIDIA says that leading system manufacturers are expected to announce systems based on the GH200 Superchip sometime in 2024. To be more specific, the mentioned timeframe is around the second quarter.

Source: NVIDIA