China’s MTT S80 Unboxed & Reviewed by Linus Tech Tips, Slower than the 30W GT 1030

Over the past few months, we have seen more and more information and tests regarding China’s MTT S80 GPU. To give some context, this is a fully home-grown Chinese product developed in-house by Moore Threads. Back in November, the GPU went on sale at various Chinese retailers. Today, Linus Tech Tips has put this GPU to the test and, oh boy, does it perform... weirdly.

Moore Threads MTT S80 Unboxed by Linus

Getting this GPU was a challenge in itself. Linus Tech Tips contacted Moore Threads several times about a review sample, but the company did not respond. The initial wave of GPUs was handed out to users through a ‘lottery system’, making it quite difficult for anyone outside China to get one.

So, where did Linus get his GPU? An unnamed user sent their card to Linus Tech Tips, who, well, did a complete teardown and breakdown of the MTT S80.

The Power Solution

On unboxing the GPU, Linus found that the MTT S80, rather than using standard PCIe power connectors, uses an adapter that goes from 2x PCIe 8-pin to an 8-pin EPS connector. The EPS connector is normally used to supply power to a CPU, not a GPU.

Linus further points out that this is more often seen in server setups than on mainstream desktop GPUs. Technically, a single 8-pin EPS connector can power this GPU, since it is surprisingly rated for up to 336W.
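Where does 336W come from? A common back-of-the-envelope derivation multiplies the connector’s four +12V pins by a per-pin current rating. The sketch below assumes 7A per pin, a figure not stated in the review, so treat it as an illustration rather than a spec citation; the function name is hypothetical.

```python
# Rough power rating of an 8-pin EPS12V connector.
# Assumption: 4 of the 8 pins carry +12 V, each rated at about 7 A.

def connector_power_w(live_pins: int, amps_per_pin: float, volts: float = 12.0) -> float:
    """Return the maximum power (W) a connector can carry across its live pins."""
    return live_pins * amps_per_pin * volts

eps_8pin = connector_power_w(live_pins=4, amps_per_pin=7.0)
print(f"8-pin EPS: {eps_8pin:.0f} W")  # 4 pins * 7 A * 12 V = 336 W
```

The same function works for any connector once you know how many pins carry 12V and what each pin is rated for.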

Moore Threads also has a server-oriented GPU, the MTT S3000, which uses an EPS connector. ‘Probably’ to simplify the design and do as little work as possible, the company just slapped the same power delivery solution onto its mainstream MTT S80.

Modern Ports

On the back of the GPU, we find three DisplayPort 1.4a ports and one HDMI 2.1 port, which makes the S80 fairly up to date. You would expect that, given that this GPU is the industry’s first to support PCIe Gen 5 (which really doesn’t mean much, ‘for now’).

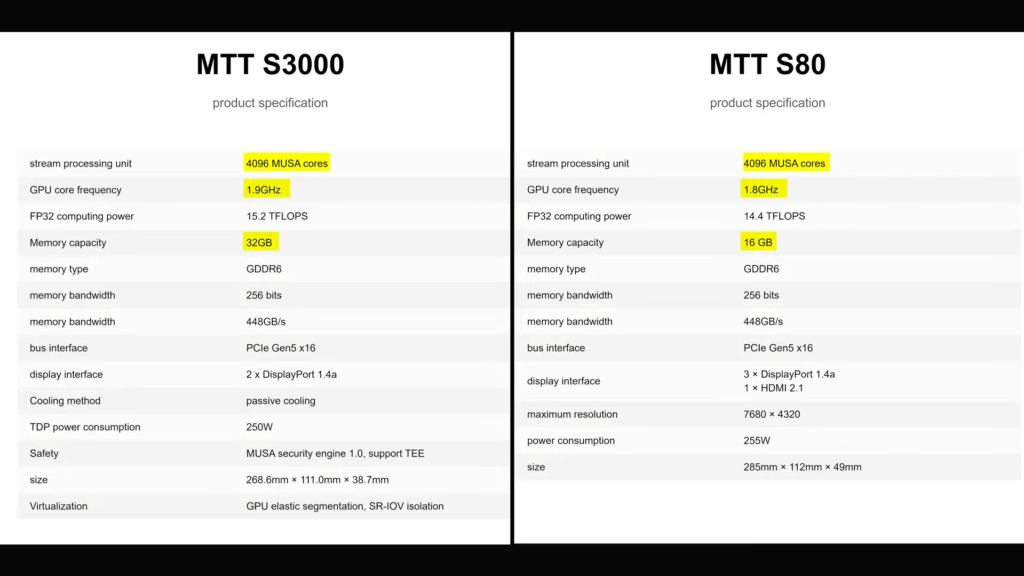

Specifications

Let’s go over the specifications now. The Moore Threads MTT S80 features 4096 shading units, or MUSA cores. The GPU runs at 1.8GHz, a respectable clock speed, and comes with 16GB of GDDR6 VRAM. It can ‘handle’ video formats including AV1, H.264, H.265, and VP9, and supports HDR10 processing and playback.
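To put the 4096 cores and 1.8GHz clock in perspective, here is a rough peak-FP32 estimate. It assumes each MUSA core completes one fused multiply-add (2 FLOPs) per clock, the convention usually applied to NVIDIA and AMD shader counts; the review doesn’t confirm that MUSA cores work this way, and a peak figure says nothing about real game performance. The function name is hypothetical.

```python
# Rough theoretical peak FP32 throughput for a GPU.
# Assumption: every core retires one fused multiply-add (2 FLOPs) per clock.

def peak_fp32_tflops(cores: int, clock_ghz: float, flops_per_clock: int = 2) -> float:
    """cores * clock (GHz) * FLOPs per clock gives GFLOPS; divide by 1000 for TFLOPS."""
    return cores * clock_ghz * flops_per_clock / 1000.0

s80 = peak_fp32_tflops(cores=4096, clock_ghz=1.8)
print(f"MTT S80 peak FP32: ~{s80:.1f} TFLOPS")  # ~14.7 TFLOPS
```

As the benchmarks below show, drivers and API support matter far more than this on-paper number.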

Over a 256-bit memory bus, the 16GB of 14Gbps memory delivers 448GB/s of bandwidth. Theoretically, the card lies somewhere between an RTX 3060 and an RTX 3060 Ti, but remember we used the word ‘theoretically’. The GPU also has built-in AI accelerators similar to NVIDIA’s Tensor cores, though it is not clear what these cores are intended for.
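The 448GB/s figure follows from simple arithmetic: the bus width in bytes times the per-pin data rate. A minimal sketch (the function name is ours, purely illustrative):

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width in bytes times per-pin data rate in Gb/s."""
    bus_width_bytes = bus_width_bits / 8  # 256 bits -> 32 bytes moved per transfer across the bus
    return bus_width_bytes * data_rate_gbps

print(memory_bandwidth_gbs(256, 14.0))  # 448.0
```

This is a peak figure; effective bandwidth in real workloads is lower.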

First Boot

Surprise! The GPU boots into Windows. But there’s a catch to getting it to start at all: the MTT S80 supports only 17 motherboards and is often sold as part of a bundle. Luckily, Linus had one of the listed motherboards, so keep this in mind if you plan on buying this GPU.

Moving over to the settings, HDR is not supported, though the card does run at 4K 120Hz. OBS does not recognize the GPU’s hardware encoder, which is a bummer. And unless you read Chinese, you might have to resort to Google Lens, since the S80’s entire software package is in Chinese.

Gaming Benchmarks

Before we get into the benchmarks, we all have a fair idea of how this GPU will perform, so let’s keep our expectations really low. Other reviewers have already put this card to the test, and the results were... bad.

Shadow Of The Tomb Raider

The GPU ran perfectly decently and delivered ample FPS until we actually opened the game. Those ample frame rates we were talking about? That was the settings menu that pops up before you start Shadow of the Tomb Raider. And what happens when we actually start the game? It freezes. That’s it.

What gives? Basically, the S80 supports only a few DX9 games, and even fewer DX11 games due to its lack of tessellation support. Why don’t we try something that works?

League of Legends

Moving over to everyone’s favorite MOBA (sorry, Dota 2 fans), Linus put the MTT S80 up against a fierce competitor. Meet the budget gamer’s haven, the GTX 1660 (non-Super). With both cards paired with a CPU that (probably) ranks among the top five fastest gaming CPUs, we expected a lot from both setups.

Since no overlay could be used (for anti-cheat reasons), the game was described as ‘working fine’ at medium and very high settings. And this was at 4K. There were apparently no graphical issues such as artifacts, so that’s a win for Moore Threads.

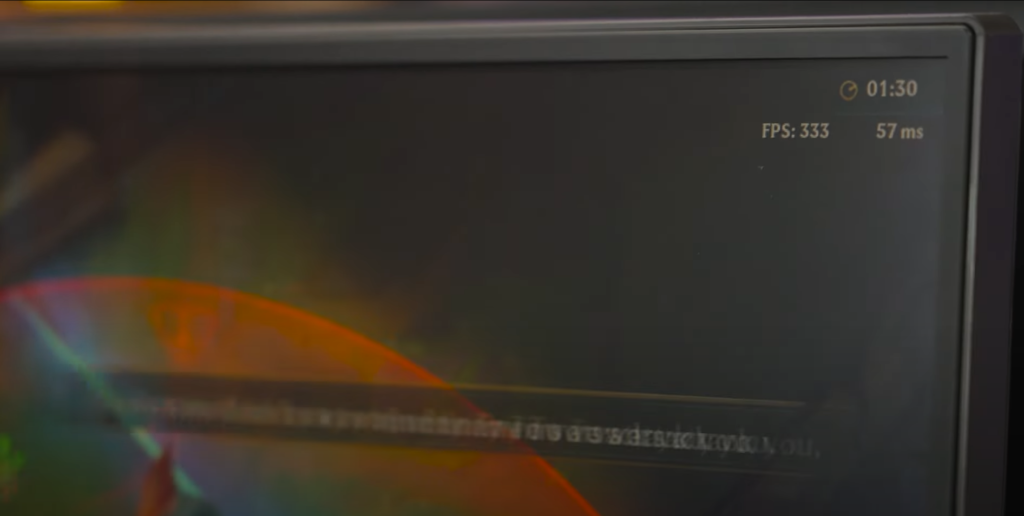

Using LoL’s built-in FPS counter, the game was running at 300–400 FPS on the very high preset. Nice! Sorry to be the party pooper, but the GTX 1660 was getting 1000 FPS. Linus summed up the experience as ‘1/8th of the performance, at 2x the power consumption’.

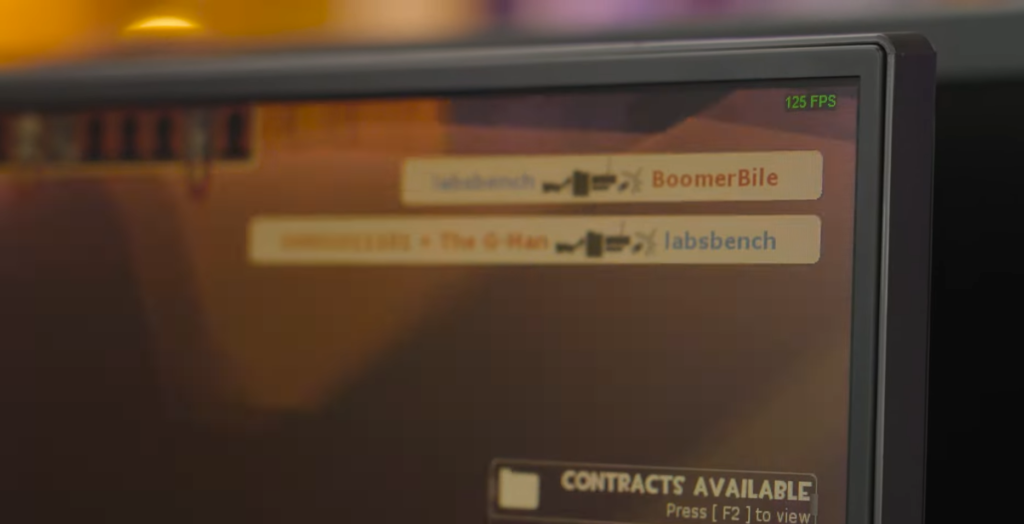

CS: GO

Who said 30 FPS isn’t playable? It is, provided it’s a consistent 30 FPS. The S80, however, struggles to run CS: GO, and its frametime graph is all over the place. You definitely wouldn’t clutch a 1v5 with this setup. The GTX 1660 PC, by comparison, scores around 160–200 FPS; you get the idea.

The S80’s result here is about what you’d get with the GT 1030, a 30W GPU that can be had for as little as $30–$40 used. That should probably surprise us, but it really doesn’t. It sure did surprise Linus, though.

Team Fortress 2

Team Fortress 2 ran at anywhere between 70 and 100 FPS. Impressive! So you can enjoy TF2 with your buddies on this GPU, but sadly, this is nothing compared to even dated mid-range GPUs.

Other Games?

Outside ‘supported games’ territory, the GPU simply fails to open other titles. It is impressive that it can play certain titles at all, but I personally wouldn’t recommend this card to any of our readers, unless you get it for free.

The GPU’s Internals

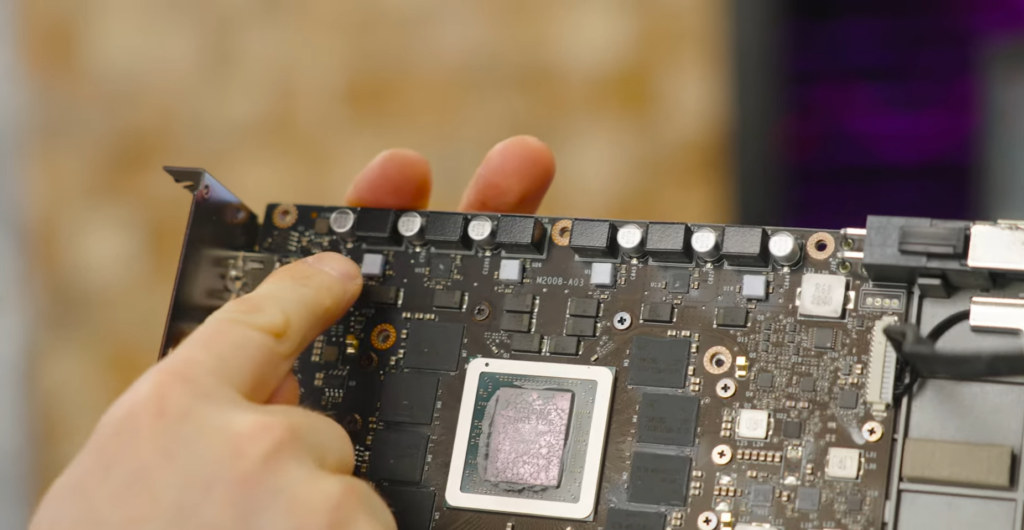

The 8-pin EPS connector we talked about above goes through a 4-pin connector. And yes, the card does draw 250W, but that includes the extra 75W supplied by the PCIe slot.
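Those numbers also hint at how much headroom the EPS connector has. A quick sketch, assuming the slot really supplies its full 75W and the rest of the 250W flows through the EPS connector (the actual split wasn’t measured in the review), and reusing the 336W EPS rating quoted earlier in the article:

```python
# Hypothetical power-budget split for the MTT S80.
# Assumption: the PCIe slot supplies its full 75 W and the EPS connector carries the rest.
BOARD_POWER_W = 250  # total board draw cited in the teardown
PCIE_SLOT_W = 75     # maximum a PCIe x16 graphics slot is specified to deliver
EPS_RATING_W = 336   # 8-pin EPS rating quoted earlier in the review

eps_load_w = BOARD_POWER_W - PCIE_SLOT_W  # power that must come through the EPS connector
headroom_w = EPS_RATING_W - eps_load_w    # unused capacity left on the connector
print(f"EPS load: {eps_load_w} W, headroom: {headroom_w} W")  # EPS load: 175 W, headroom: 161 W
```

In other words, even under this simple model the single EPS connector is comfortably within its rating.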

The VRMs, or voltage regulator modules, are built on top of the GPU board, which is quite surprising. The GPU die is surrounded by eight 2GB VRAM chips labeled ‘Samsung’.

And here’s the SD102AA-500 die used in this GPU. Looks beautiful, doesn’t it? Then again, all GPU dies look beautiful, but this one has a slight hint of red.

Synthetic/AI Performance

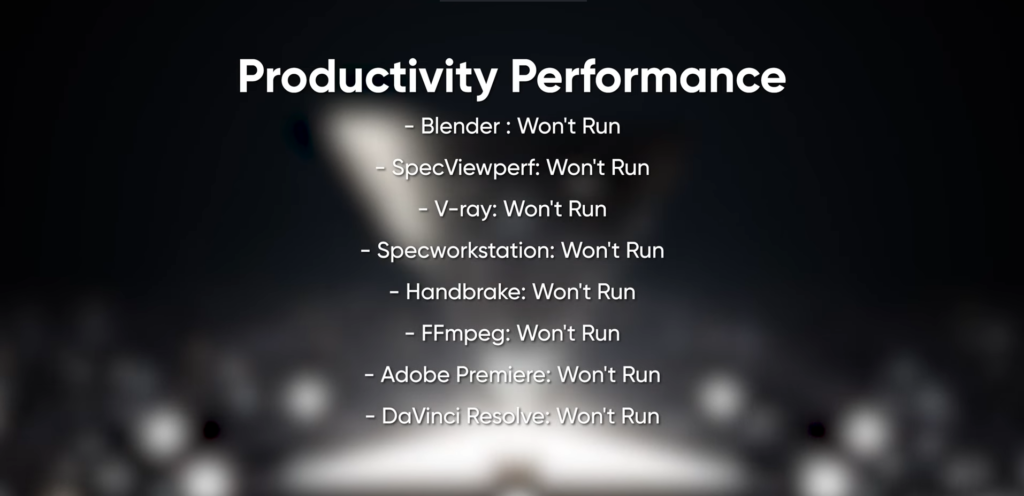

You might expect this GPU to find its uses in AI acceleration and synthetic workloads, if not in gaming. How does it perform? The image above makes it clear that this GPU suffers from some serious problems, probably at the software level.

Conclusion

While Chinese GPUs that can compete with current tech are still far off in the future, we will eventually see them. It is a good thing to have more competitors stepping into the semiconductor field. When Intel Arc first launched, people were skeptical of those GPUs; now, the community is pumped up for Battlemage.

Do you think China will be able to launch its first bleeding-edge processor in the next 10 years? Tell us in the comments below.